Understanding Prompts in Modern AI Systems

In artificial intelligence, a prompt is the instruction or input given to a model to shape its response. Prompts can be as short as a single sentence or as detailed as a structured specification containing constraints, examples, and formatting rules. As large language models become more powerful, prompt design has shifted from casual experimentation to a discipline that directly affects reliability, consistency, and business outcomes.

Modern models from organizations like OpenAI and Google DeepMind demonstrate remarkable capabilities, yet they remain highly sensitive to ambiguity. The difference between a vague instruction and a precise one often determines whether the output is merely plausible or genuinely useful.

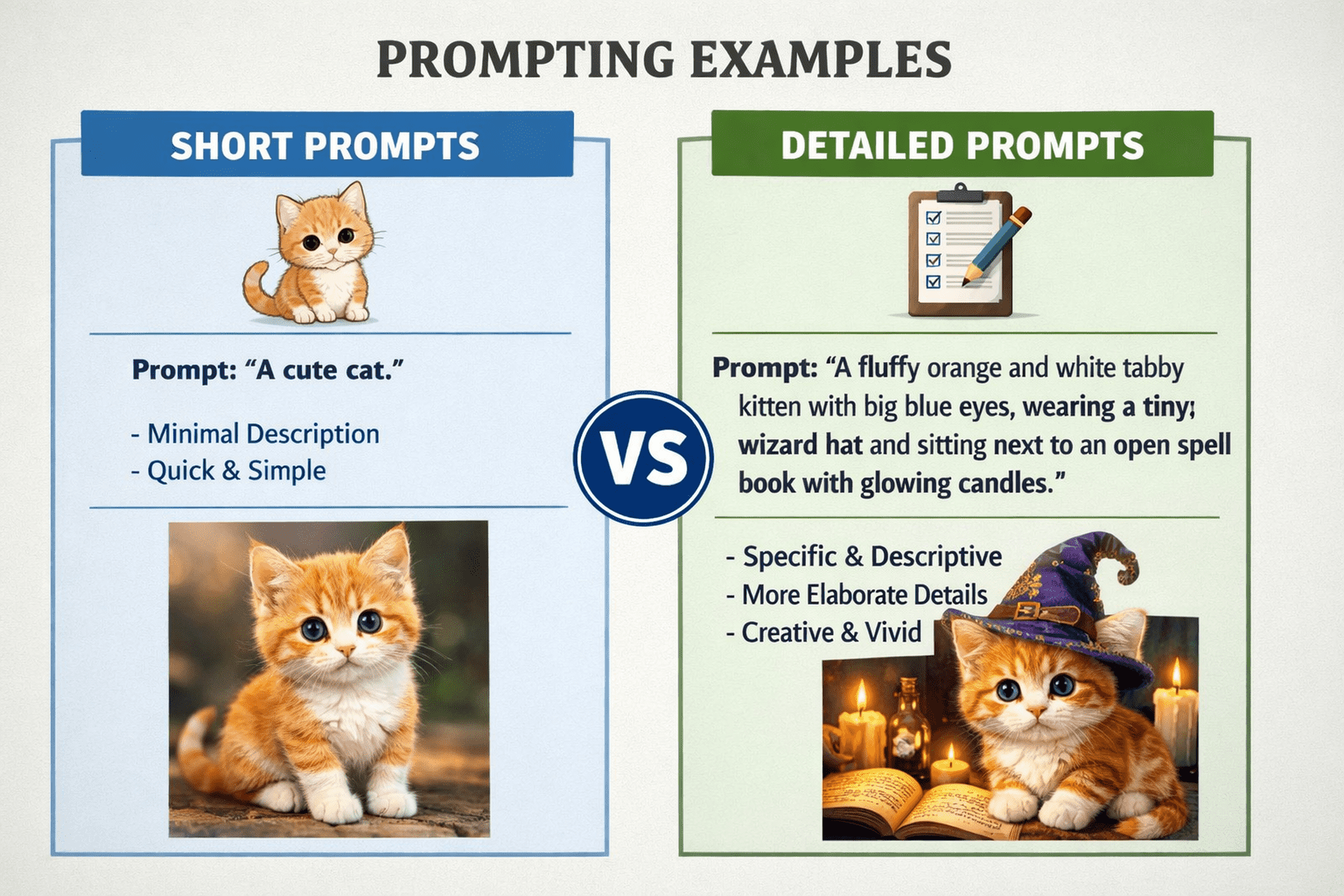

Short Prompts vs Detailed Prompts

What Are Short Prompts?

Short prompts are concise requests, typically one or two sentences long, that rely on the model’s ability to infer context. They are fast to write and ideal for exploratory interactions.

Example

“Summarize the benefits of remote work.”

These prompts work well when users prioritize speed, creativity, or broad insights rather than strict control.

What Are Detailed Prompts?

Detailed prompts include additional context, constraints, tone guidance, and sometimes examples. They reduce interpretive freedom and guide the model toward predictable results.

Example

“Summarize the benefits of remote work for mid-sized technology companies. Focus on productivity, retention, and cost efficiency. Use bullet points and a professional tone.”

Detailed prompts trade brevity for precision, which becomes critical when outputs must meet defined standards.
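One way to picture the difference is to treat a detailed prompt as a short task plus optional fields. The sketch below is illustrative only: `PromptSpec`, `join_items`, and the field names are hypothetical and not part of any library. It rebuilds the two example prompts above from the same task sentence.

```python
from dataclasses import dataclass, field


def join_items(items):
    """Join items as 'a', 'a and b', or 'a, b, and c'."""
    if len(items) <= 2:
        return " and ".join(items)
    return ", ".join(items[:-1]) + ", and " + items[-1]


@dataclass
class PromptSpec:
    """Hypothetical container for the parts of a prompt."""
    task: str
    audience: str = ""
    focus: list[str] = field(default_factory=list)
    output_format: str = ""
    tone: str = ""

    def render(self) -> str:
        # Start from the bare task, then append each detail that was supplied.
        head = self.task.rstrip(".")
        if self.audience:
            head += f" for {self.audience}"
        sentences = [head + "."]
        if self.focus:
            sentences.append("Focus on " + join_items(self.focus) + ".")
        style = [s for s in (self.output_format, self.tone) if s]
        if style:
            sentences.append("Use " + " and ".join(style) + ".")
        return " ".join(sentences)


short_prompt = PromptSpec(task="Summarize the benefits of remote work").render()

detailed_prompt = PromptSpec(
    task="Summarize the benefits of remote work",
    audience="mid-sized technology companies",
    focus=["productivity", "retention", "cost efficiency"],
    output_format="bullet points",
    tone="a professional tone",
).render()

print(short_prompt)
print(detailed_prompt)
```

Every field left empty is a decision handed to the model, which is where the extra variability of short prompts comes from.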

How Short Prompts Perform in Practice

Short prompts excel in ideation and rapid experimentation. In marketing and creative workflows, they allow users to test multiple ideas quickly with minimal effort. Teams brainstorming headlines, social media posts, or conceptual angles often benefit from the speed and flexibility short prompts provide.

However, this efficiency comes with variability. Because short prompts leave room for interpretation, responses may differ across runs in tone, structure, and depth. While this variability can be useful in creative contexts, it becomes problematic in environments requiring consistency, such as legal, financial, or technical documentation.

Short prompts are most effective when:

- Exploration and speed matter more than precision

- Output diversity is desirable

- Minor inconsistencies are acceptable

Why Detailed Prompts Improve Reliability

Detailed prompts shine when accuracy, structure, and repeatability are essential. By explicitly defining audience, format, and constraints, users reduce ambiguity and guide the model toward outputs aligned with real-world needs.

For example, instructional designers generating assessments or learning materials often use detailed prompts to control cognitive level, tone, and instructional intent. Similarly, developers requesting code generation or debugging assistance benefit from clearly defined requirements.

OpenAI's published prompting guidance emphasizes that explicit instructions improve model performance on complex tasks. Work from academic groups such as the Stanford Institute for Human-Centered AI points in the same direction: structured input tends to produce more dependable system behavior.

Detailed prompts are most effective when:

- Output consistency is critical

- Tasks involve technical or regulated content

- Revisions are costly or time-sensitive

Comparing Prompt Strategies

Short and detailed prompts serve different purposes rather than competing directly. The choice depends on reliability requirements, task complexity, and workflow stage.

Short prompts favor efficiency and flexibility but introduce greater variability. Detailed prompts demand more upfront effort yet typically reduce downstream corrections and rework.

The practical question is not which style is superior, but when each style is appropriate.

Prompt Optimization Techniques That Work Across Styles

Rather than relying solely on prompt length, experienced practitioners apply prompt optimization techniques to improve output quality regardless of verbosity.

Role Assignment

Defining the model’s perspective narrows the vocabulary, assumptions, and priorities it draws on.

Example

“Act as a cybersecurity analyst.”

Constraint Specification

Explicit constraints reduce unwanted variation.

Example

“Limit the response to five bullet points.”

Few-Shot Examples

Providing examples clarifies expectations and patterns.

Example

“Here are two sample questions with answers in the required format. Answer the third question the same way.”

Iterative Refinement

Effective prompting is rarely achieved in one attempt. Users evaluate outputs and refine instructions incrementally.

These techniques often matter more than prompt length itself. A concise prompt with clear constraints can outperform a lengthy but unfocused instruction set.
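The first three techniques can be combined mechanically. The following is a minimal sketch, assuming prompts are plain strings; `build_prompt` and its parameters are hypothetical names chosen for illustration, and the sample task and example pair are invented.

```python
def build_prompt(task, role=None, constraints=(), examples=()):
    """Assemble a prompt from an optional role, constraints, and few-shot examples."""
    parts = []
    if role:
        # Role assignment: set the perspective first.
        parts.append(f"Act as {role}.")
    if constraints:
        # Constraint specification: one rule per line.
        parts.append("Constraints:")
        parts.extend(f"- {c}" for c in constraints)
    # Few-shot examples: (input, output) pairs showing the expected pattern.
    for i, (example_input, example_output) in enumerate(examples, start=1):
        parts.append(f"Example {i}")
        parts.append(f"Input: {example_input}")
        parts.append(f"Output: {example_output}")
    # The actual request goes last, after the pattern has been established.
    parts.append(task)
    return "\n".join(parts)


prompt = build_prompt(
    task="Input: Repeated failed logins from a single IP address",
    role="a cybersecurity analyst",
    constraints=["Limit the response to five bullet points."],
    examples=[("Port scan from an internal host", "Severity: medium")],
)
print(prompt)
```

The resulting prompt is only a few lines long, which illustrates the point above: clear constraints matter more than word count.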

Example: Prompt Evolution in a Workflow

Prompting commonly follows an iterative path.

Initial prompt

“Write a blog post about data privacy.”

Refined prompt

“Write a 1,000-word blog post about data privacy for small business owners. Explain GDPR and CCPA at a high level, include practical examples, and maintain a formal tone with clear headings.”

The second version introduces audience targeting, scope definition, and stylistic guidance. This added structure typically yields more usable results with fewer revisions.
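When prompts go through several revisions, it can help to record exactly what each revision added. The sketch below uses Python's standard `difflib` module to compare the two prompts above word by word; the `words` and `prompt_changes` helpers are hypothetical, and stripping trailing punctuation is a simplifying assumption.

```python
import difflib


def words(text):
    """Split into words, dropping leading and trailing commas and periods."""
    return [w.strip(".,") for w in text.split()]


def prompt_changes(old, new):
    """Return (added, removed) word lists between two prompt versions."""
    diff = list(difflib.ndiff(words(old), words(new)))
    added = [line[2:] for line in diff if line.startswith("+ ")]
    removed = [line[2:] for line in diff if line.startswith("- ")]
    return added, removed


initial = "Write a blog post about data privacy."
refined = (
    "Write a 1,000-word blog post about data privacy for small business owners. "
    "Explain GDPR and CCPA at a high level, include practical examples, "
    "and maintain a formal tone with clear headings."
)

added, removed = prompt_changes(initial, refined)
print("Added:", " ".join(added))
print("Removed:", " ".join(removed))
```

Here the refinement is purely additive: nothing from the initial prompt was dropped, and every added word carries audience, scope, or style information.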

Industry-Specific Prompting Patterns

Different domains favor different prompting strategies.

Education

Detailed prompts help control learning objectives, complexity, and pedagogical framing.

Marketing

Short prompts encourage creative exploration, while detailed prompts finalize messaging and tone.

Software Development

Detailed prompts support debugging, refactoring, and documentation where precision is essential.

Customer Support

Structured prompts improve response consistency and reduce policy deviations.

Expert Perspectives on Prompting

Researchers and practitioners increasingly describe prompting as a skill rather than a shortcut. Ethan Mollick of the Wharton School frequently emphasizes that effective prompting reflects clear thinking and intentional design.

Evidence across technical documentation, usability studies, and research repositories such as the ACM Digital Library supports a consistent conclusion: clarity and specificity are stronger predictors of output quality than prompt length alone.

Practical Takeaways for Improving AI Outputs

- Use short prompts during exploration, ideation, and experimentation.

- Adopt detailed prompts for production, compliance, and technical tasks.

- Apply prompt optimization techniques to reduce ambiguity.

- Iterate deliberately rather than expecting perfection immediately.

- Document successful prompts as reusable templates.

- Continuously validate outputs against trusted references.
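The last two takeaways can be sketched with Python's standard `string.Template`. Everything named here (`SUMMARY_TEMPLATE`, `count_bullets`, `within_bullet_limit`) is hypothetical, and `sample_output` is a hand-written stand-in for a model response, not real model output. The check shown covers only format; validating facts against trusted references still requires a human or a separate source of truth.

```python
from string import Template

# A reusable template: the wording is fixed, only the placeholders vary.
SUMMARY_TEMPLATE = Template(
    "Summarize the benefits of $topic for $audience. "
    "Limit the response to $max_bullets bullet points."
)


def count_bullets(text):
    """Count lines that start with a common bullet marker."""
    markers = ("-", "*", "•")
    return sum(1 for line in text.splitlines() if line.lstrip().startswith(markers))


def within_bullet_limit(output, max_bullets):
    """Return True if the output has at least one bullet and no more than the limit."""
    return 0 < count_bullets(output) <= max_bullets


# substitute() raises KeyError if any placeholder is left unfilled.
prompt = SUMMARY_TEMPLATE.substitute(
    topic="remote work",
    audience="mid-sized technology companies",
    max_bullets=5,
)

# Hand-written stand-in for a model response.
sample_output = "- Higher productivity\n- Better retention\n- Lower office costs"

print(prompt)
print(within_bullet_limit(sample_output, 5))
```

Keeping the template and its check side by side means a prompt that worked once can be reused, and a response that ignores the stated constraint is caught before anyone relies on it.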

Conclusion

The short-versus-detailed prompt debate is ultimately about intent and timing. Short prompts enable rapid discovery and creative divergence. Detailed prompts provide control, consistency, and reliability when outcomes carry greater consequences.

As AI systems evolve toward more autonomous and agentic behavior, the ability to communicate intent precisely becomes a strategic advantage. Well-designed prompts do more than improve model responses; they sharpen problem definition, clarify objectives, and streamline decision-making.

FAQs

Are short prompts actually good enough for AI tools?

Short prompts can work well for simple, common tasks like summarizing text or generating basic ideas. They’re fast and flexible, but they leave more room for the AI to guess what you want, which can reduce consistency.

When do detailed prompts work better?

Detailed prompts are better when you need accuracy, a specific tone, or structured output. By clearly stating context, constraints, and expectations, you reduce ambiguity and get more reliable results.

Do longer prompts always mean better results?

Not always. Extra detail helps only if it’s relevant. Overloading a prompt with unnecessary instructions can confuse the model or dilute the main goal.

Which is more reliable: short or detailed prompts?

Detailed prompts are generally more reliable, especially for complex tasks. Short prompts are fine for quick experimentation, but they tend to produce more variable outputs.

How short is too short for a prompt?

If a prompt doesn’t clearly state the task, the audience, or the desired format, it’s probably too short. The AI may still respond, but the result may not match your intent.

Can I start short and then add details?

Yes. This is often a smart approach. Start with a short prompt, review the output, then refine it with more details to improve accuracy and alignment.

What’s the best balance between short and detailed prompts?

The best balance is being clear without being wordy. Include just enough context and constraints to guide the AI, while avoiding instructions that don’t directly affect the outcome.