The Challenge of Cloud Jargon in Modern Content Strategy

In the race to deliver hyper-personalized, globally distributed digital experiences, content teams often find themselves navigating a maze of technical terminology. Cloud concepts, while powerful, can feel abstract and disconnected from day-to-day content goals. Yet understanding them is no longer optional. When strategists grasp how cloud infrastructure shapes performance, scalability, and personalization, technical decisions become strategic advantages rather than obstacles.

Modern delivery mechanisms have evolved far beyond simple hosting and caching. Technologies like edge computing, serverless functions, and containerized microservices directly influence user experience, latency, and operational efficiency. Translating this jargon into practical insight allows content leaders to align platform capabilities with audience expectations.

Content Delivery Networks: Accelerating Global Experiences

A Content Delivery Network (CDN) is more than a caching layer. It is a distributed system of edge servers designed to deliver assets from locations geographically close to users. By shortening the physical distance data must travel, CDNs dramatically reduce latency, a critical factor for both engagement and search performance.

When a user requests an image, script, or video, the CDN routes the request to the nearest edge location. If the asset is cached, delivery is nearly instantaneous. If not, the edge server retrieves it from the origin, stores it, and serves it. Smart cache-control policies govern this process, ensuring freshness without unnecessary origin load.
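The hit-or-miss flow described above can be sketched in a few lines. This is a toy model of a single edge location, not a real CDN API: the `EdgeCache` class, the `fetch_from_origin` callback, and the fixed TTL are all assumptions made for illustration.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class CachedAsset:
    body: bytes
    expires_at: float  # clock value after which the cached copy is stale


class EdgeCache:
    """Toy model of one edge location (hypothetical, for illustration only)."""

    def __init__(
        self,
        fetch_from_origin: Callable[[str], bytes],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch_from_origin
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[str, CachedAsset] = {}
        self.origin_fetches = 0  # counts how often the origin was contacted

    def get(self, path: str) -> bytes:
        now = self._clock()
        cached = self._store.get(path)
        if cached is not None and cached.expires_at > now:
            return cached.body  # cache hit: served without touching the origin
        # Cache miss or stale copy: fetch from origin, store, then serve.
        body = self._fetch(path)
        self.origin_fetches += 1
        self._store[path] = CachedAsset(body, now + self._ttl)
        return body
```

Requesting the same path twice within the TTL contacts the origin only once, which is the effect a cache policy is meant to produce.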

For content-heavy platforms, the impact is measurable. Even small reductions in Largest Contentful Paint (LCP) can improve perceived speed and retention. However, CDNs introduce trade-offs. Dynamic or personalized content complicates caching, and inefficient invalidation strategies can overload origin servers or serve stale assets. Advanced implementations increasingly combine CDNs with edge logic to handle personalization closer to the user.
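One way to picture the caching trade-off is as a rule that picks a `Cache-Control` header per response. The sketch below is an assumption-laden example rather than a recommended policy: the function name, the file extensions, and the lifetimes are hypothetical, and the long-lived rule assumes static files carry a version fingerprint in their names.

```python
# Assumed set of static asset extensions; adjust for a real site.
STATIC_EXTENSIONS = (".js", ".css", ".png", ".jpg", ".woff2")


def cache_control_for(path: str, personalized: bool = False) -> str:
    """Pick a Cache-Control header value for a response (illustrative policy)."""
    if personalized:
        # Per-user responses must not be stored by shared caches.
        return "private, no-store"
    if path.endswith(STATIC_EXTENSIONS):
        # Assumes fingerprinted file names, so a cached copy never goes stale.
        return "public, max-age=31536000, immutable"
    # Shared pages: short lifetime, refreshed in the background when stale.
    return "public, max-age=60, stale-while-revalidate=300"
```

The personalized branch is where the complication mentioned above shows up: those responses bypass the shared cache entirely and always reach the origin or edge logic.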

Object Storage: Scalable Foundations for Media-Rich Platforms

Object storage systems provide the backbone for modern content repositories. Unlike traditional file systems, object storage treats each asset as an independent object containing data, a unique identifier, and metadata. This architecture enables virtually unlimited scalability and high durability, making it ideal for images, videos, and documents.
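The object model is easy to picture as a flat mapping from keys to self-describing objects. The following is a minimal in-memory sketch, assuming hypothetical `StoredObject` and `ObjectStore` names; real services expose the same ideas over HTTP and add durability, access control, and versioning.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass(frozen=True)
class StoredObject:
    key: str                      # unique identifier within the store
    data: bytes                   # the asset itself
    metadata: Dict[str, str] = field(default_factory=dict)

    @property
    def etag(self) -> str:
        # Content fingerprint; changes whenever the data changes.
        return hashlib.sha256(self.data).hexdigest()


class ObjectStore:
    """Flat key-to-object mapping with no directories (illustrative only)."""

    def __init__(self) -> None:
        self._objects: Dict[str, StoredObject] = {}

    def put(self, key: str, data: bytes,
            metadata: Optional[Dict[str, str]] = None) -> StoredObject:
        # A put always replaces the whole object; there is no partial update.
        obj = StoredObject(key, data, dict(metadata or {}))
        self._objects[key] = obj
        return obj

    def get(self, key: str) -> StoredObject:
        return self._objects[key]
```

A key such as `media/hero.jpg` looks like a path, but in this model it is just a string; the slash carries no folder semantics.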

Because objects are accessed via simple HTTP operations, integration with applications and CDNs is straightforward. Storage costs remain low relative to block-based alternatives, and performance scales with demand. Content teams benefit from simplified management and reliable availability.

Still, object storage is not suited to every workload. Modifying a single portion of an object requires rewriting the entire asset, and retrieval delays or fees may apply to archival tiers. Strategic tiering based on access patterns becomes essential for balancing cost and responsiveness.
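Tiering by access pattern often comes down to a simple rule on how long an asset has gone unread. The sketch below assumes three hypothetical tier names and made-up thresholds of 30 and 180 days; actual tiers, names, and cut-offs depend on the provider and on the cost model.

```python
from datetime import datetime, timedelta


def choose_tier(
    last_accessed: datetime,
    now: datetime,
    warm_after_days: int = 30,      # assumed threshold
    archive_after_days: int = 180,  # assumed threshold
) -> str:
    """Suggest a storage tier from the time since the last read."""
    idle = now - last_accessed
    if idle >= timedelta(days=archive_after_days):
        return "archive"      # cheapest storage, slow or paid retrieval
    if idle >= timedelta(days=warm_after_days):
        return "infrequent"   # lower storage cost, higher access cost
    return "standard"         # frequently read assets stay here
```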

Serverless Functions: Event-Driven Content Automation

Serverless computing introduces a model where code runs in response to events without persistent server management. Functions remain idle until triggered, enabling highly efficient resource usage. For content workflows, this unlocks powerful automation possibilities.

Common scenarios include image processing, metadata extraction, and content transformation. A single upload event can initiate resizing, tagging, and indexing operations automatically. Billing is typically based on the number of invocations and the execution time consumed rather than on idle capacity, which makes serverless a good fit for intermittent or bursty workloads.
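An upload-triggered function might look roughly like the handler below. The event shape (`key`, `width`, `height`), the rendition widths, and the function names are assumptions for this sketch; each platform defines its own event format, and a real handler would call an image library and a search index instead of returning a plan.

```python
from typing import Any, Dict, List, Tuple

TARGET_WIDTHS: Tuple[int, ...] = (320, 1280)  # assumed rendition widths


def plan_renditions(width: int, height: int) -> List[Dict[str, int]]:
    """Compute aspect-preserving sizes, skipping any that would upscale."""
    plans = []
    for target in TARGET_WIDTHS:
        if target < width:
            plans.append({"width": target, "height": round(height * target / width)})
    return plans


def handle_upload(event: Dict[str, Any]) -> Dict[str, Any]:
    """Hypothetical handler: one upload event yields resize, tag, and index data."""
    key = event["key"]
    extension = key.rsplit(".", 1)[-1].lower() if "." in key else ""
    return {
        "key": key,
        "renditions": plan_renditions(event["width"], event["height"]),
        "tags": [extension] if extension else [],
    }
```

The function holds no state between calls, which is what lets the platform start and stop copies of it on demand.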

However, serverless architectures demand awareness of constraints. Cold starts, runtime limitations, and distributed debugging challenges require careful design. While these factors may be negligible for asynchronous tasks, they become significant for latency-sensitive interactions.

Headless CMS and APIs: Decoupling Content from Presentation

Headless CMS platforms separate content management from presentation. Content is stored centrally and delivered as structured data via APIs, allowing any frontend or channel to consume it. This decoupling enables true omnichannel publishing, where a single content source powers websites, apps, and emerging interfaces.

API-first design improves flexibility and performance. GraphQL, for example, allows clients to request precisely the data they need, minimizing unnecessary payloads and network overhead. This efficiency becomes increasingly valuable as content ecosystems grow more complex.
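The idea of requesting only the needed fields can be imitated without any GraphQL tooling. The function below is not a GraphQL implementation; it is a small sketch, with a hypothetical `select_fields` name and a nested-dictionary selection format, that shows how a selection trims a larger content record.

```python
from typing import Any, Dict

# A selection maps a field name to True (take the value as-is)
# or to a nested selection (descend into an object or list of objects).
Selection = Dict[str, Any]


def select_fields(record: Dict[str, Any], selection: Selection) -> Dict[str, Any]:
    """Return only the requested fields of a record (illustrative only)."""
    result: Dict[str, Any] = {}
    for name, sub in selection.items():
        value = record[name]
        if isinstance(sub, dict):
            if isinstance(value, list):
                result[name] = [select_fields(item, sub) for item in value]
            else:
                result[name] = select_fields(value, sub)
        else:
            result[name] = value
    return result
```

A mobile card that only shows a title and an author name would ask for those two fields and never download the article body.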

Yet headless systems introduce operational considerations. Frontend development complexity rises, governance becomes more critical, and API management requires discipline. Without strong coordination, fragmentation can erode the benefits of decoupling.

Containerization and Orchestration: Consistent, Scalable Deployments

Containerization packages applications and dependencies into isolated units, ensuring consistent behavior across environments. This approach eliminates many traditional deployment inconsistencies and simplifies scaling.

Orchestration platforms automate container lifecycle management, enabling rapid scaling, self-healing, and rolling updates. Content platforms benefit from resilience and predictable performance during traffic spikes or feature releases.
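Scaling and self-healing both follow from one pattern: compare the desired state with what is actually running and emit the actions that close the gap. The sketch below assumes a hypothetical `reconcile` function and a simple name-to-health mapping; real orchestrators run a far richer version of this loop continuously.

```python
from typing import Dict, List, Tuple

Action = Tuple[str, str]  # (verb, target), e.g. ("stop", "web-2")


def reconcile(desired: int, containers: Dict[str, bool]) -> List[Action]:
    """Plan actions so that exactly `desired` healthy containers run.

    `containers` maps a container name to True if it is healthy.
    """
    healthy = sorted(name for name, ok in containers.items() if ok)
    unhealthy = sorted(name for name, ok in containers.items() if not ok)

    # Self-healing: unhealthy containers are always stopped.
    actions: List[Action] = [("stop", name) for name in unhealthy]

    if len(healthy) > desired:
        # Scale down: stop the surplus healthy containers.
        actions += [("stop", name) for name in healthy[desired:]]
    else:
        # Scale up, or replace what was stopped above.
        actions += [("start", "<new>")] * (desired - len(healthy))
    return actions
```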

The trade-off lies in complexity. Distributed systems, stateful components, and operational overhead demand expertise. Smaller teams must evaluate whether the scalability gains justify the learning curve and maintenance requirements.

Infrastructure as Code: Reliable, Repeatable Environments

Infrastructure as Code (IaC) shifts environment management from manual configuration to declarative definitions. Cloud resources become version-controlled artifacts, reducing human error and enabling rapid environment replication.
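Declarative tooling generally works by diffing the definition in version control against what currently exists. The following sketch assumes a hypothetical `plan` function and resources described as plain dictionaries; it is not the behavior of any particular IaC tool.

```python
from typing import Any, Dict, List

Resources = Dict[str, Dict[str, Any]]  # resource name -> its settings


def plan(desired: Resources, current: Resources) -> Dict[str, List[str]]:
    """Compare declared resources with existing ones and list the changes."""
    create = sorted(desired.keys() - current.keys())
    delete = sorted(current.keys() - desired.keys())
    update = sorted(
        name for name in desired.keys() & current.keys()
        if desired[name] != current[name]
    )
    return {"create": create, "update": update, "delete": delete}
```

Reviewing a plan like this before applying it is one common safeguard against a misconfiguration spreading to every environment.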

For content operations, IaC supports consistent staging, testing, and disaster recovery strategies. Entire platforms can be recreated or modified through controlled updates, improving reliability and auditability.

However, IaC requires disciplined state management and validation processes. Misconfigurations can propagate quickly, underscoring the need for review and safeguards.

Managed Databases: Supporting Dynamic Content at Scale

Managed database services abstract operational complexity, handling backups, scaling, and failover automatically. These systems underpin dynamic content delivery, personalization, and analytics.

Relational and NoSQL options serve different needs. Structured content models, user profiles, and high-throughput interactions each benefit from distinct database paradigms. Selecting the right model shapes both performance and cost efficiency.
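The difference between the two models shows up in how data is shaped and read. The sketch below uses Python's built-in `sqlite3` for the relational side and a JSON document for the document side; the table names, fields, and sample values are invented for illustration and do not represent any managed service.

```python
import json
import sqlite3
from typing import List, Tuple


def relational_lookup() -> Tuple[str, str]:
    """Structured content: separate tables joined at query time."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
        conn.execute(
            "CREATE TABLE articles ("
            "id INTEGER PRIMARY KEY, title TEXT NOT NULL, "
            "author_id INTEGER NOT NULL REFERENCES authors(id))"
        )
        conn.execute("INSERT INTO authors (id, name) VALUES (1, 'Ada')")
        conn.execute(
            "INSERT INTO articles (id, title, author_id) "
            "VALUES (1, 'Edge caching basics', 1)"
        )
        return conn.execute(
            "SELECT articles.title, authors.name FROM articles "
            "JOIN authors ON authors.id = articles.author_id"
        ).fetchone()
    finally:
        conn.close()


def document_lookup() -> List[str]:
    """User profile: one self-contained document read in a single fetch."""
    stored = json.dumps(
        {"user_id": "u-42", "preferences": {"topics": ["cdn", "serverless"], "language": "en"}}
    )
    profile = json.loads(stored)
    return profile["preferences"]["topics"]
```

The relational version enforces a shape and supports joins; the document version keeps everything about one user together, which suits lookups by a single key.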

Despite their convenience, managed databases still require thoughtful optimization. Query performance, indexing strategies, and regional replication choices remain strategic decisions.

Cloud-Native Architecture: Designing for Agility and Resilience

Cloud-native design embraces modularity, automation, and distributed scalability. Microservices, APIs, containers, and serverless functions combine to create platforms that evolve rapidly and recover gracefully from failure.

This paradigm enables continuous improvement and elastic scaling, but it also increases architectural complexity. Observability, monitoring, and consistency mechanisms become essential components rather than optional enhancements.

Edge Computing: Reducing Latency Through Proximity

Edge computing extends processing capabilities closer to users, allowing dynamic logic to execute without full origin round-trips. For personalization, experimentation, and real-time interactions, this proximity yields significant performance gains.
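Edge logic for personalization and experimentation is often small: take a cached page, look at the request, and adjust the response without calling the origin. The sketch below is hypothetical throughout; the `x-viewer-country` header, the `{{banner}}` placeholder, the banner texts, and the function names are assumptions, and real edge platforms each provide their own request and response objects.

```python
import hashlib
from typing import Dict

BANNERS = {"DE": "Kostenloser Versand", "FR": "Livraison gratuite"}  # sample data
DEFAULT_BANNER = "Free shipping"


def assign_bucket(user_id: str, experiment: str) -> str:
    """Deterministically place a user in bucket A or B for an experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    return "A" if digest[0] % 2 == 0 else "B"


def personalize(cached_html: str, headers: Dict[str, str]) -> str:
    """Fill the banner placeholder based on the viewer's country header."""
    country = headers.get("x-viewer-country", "").upper()
    banner = BANNERS.get(country, DEFAULT_BANNER)
    return cached_html.replace("{{banner}}", banner)
```

Because the bucket is derived from a hash rather than stored, every edge location assigns the same user to the same variant without shared state, which sidesteps part of the synchronization problem.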

The challenge lies in synchronization, security, and debugging. Distributed execution environments demand robust data strategies and monitoring practices.

Conclusion: From Terminology to Tactical Advantage

Mastering cloud concepts is less about memorizing definitions and more about understanding their practical implications. When strategists connect infrastructure choices to user experience, latency, scalability, and cost, cloud jargon transforms into actionable insight.

Hands-on experimentation, measurement, and iteration remain the most effective learning tools. Observing how architectural decisions influence performance and reliability builds intuition that no glossary can replace. As cloud technologies continue to evolve, continuous exploration ensures that content strategies remain both technically informed and competitively agile.

FAQs

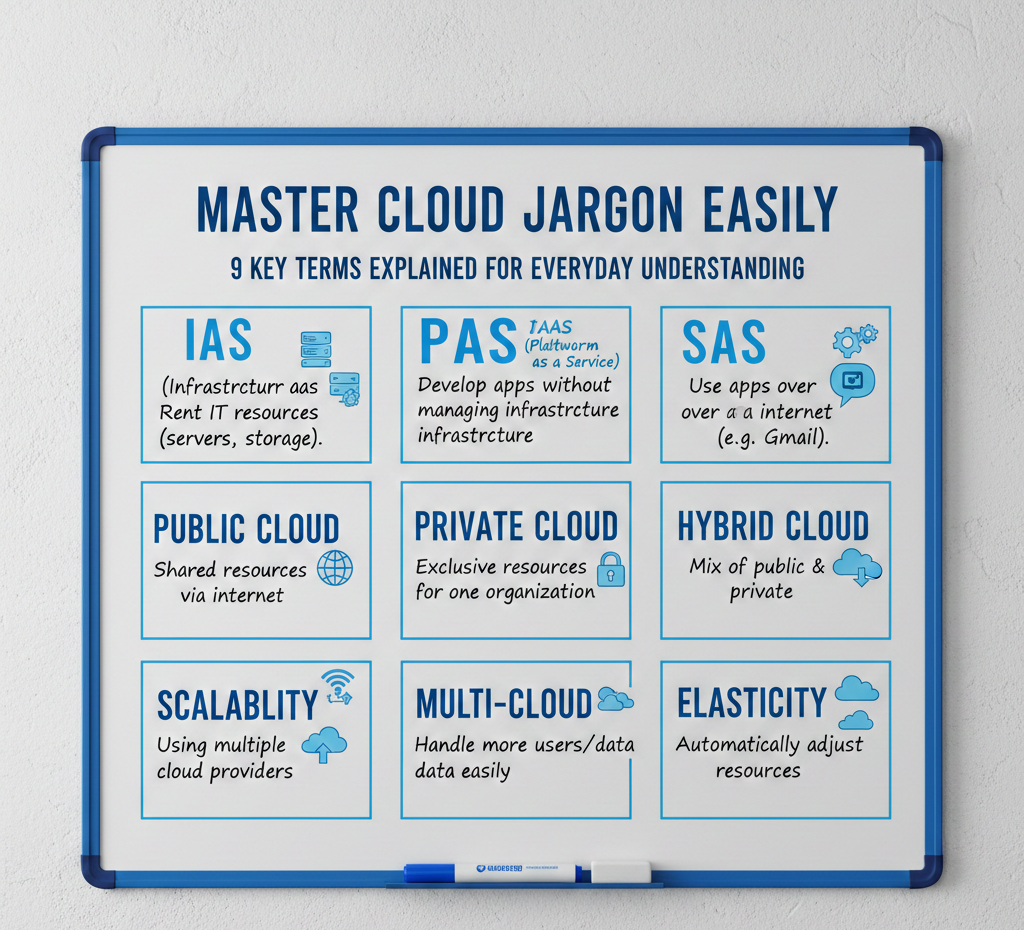

What’s the main idea behind ‘Master Cloud Jargon Easily’?

This guide is designed to cut through the confusing language of cloud computing. We break down 9 essential cloud terms into simple, everyday explanations so you can follow what people are talking about without needing a tech dictionary.

Why bother learning these cloud terms?

Understanding cloud jargon isn’t just for IT pros anymore. It helps you make better decisions for your business or personal tech, communicate more effectively with tech teams, and generally feel less lost when cloud topics come up in conversations or news.

What kind of cloud terms can I expect to learn?

We focus on the most commonly used but often misunderstood terms. Think foundational concepts that frequently pop up in discussions about cloud services, storage, and infrastructure, the ones that are key to grasping the basics.

Is this guide only for tech beginners?

Not at all! While it’s perfect for anyone new to cloud concepts, even those with some tech background can benefit from these simplified explanations. Our goal is clarity and practical understanding for everyone, regardless of their current expertise level.

How does this guide make complex terms easy to grasp?

We use straightforward language, relatable analogies, and real-world examples to demystify each term. The focus is on ‘what it means for you’ rather than deep technical specifications, helping you grasp the core concept quickly.

After reading this, will I be a cloud architect?

While you’ll definitely gain a much clearer understanding of key cloud concepts, this guide isn’t meant to turn you into a certified cloud architect overnight. It’s a fantastic starting point to build your foundational knowledge and confidence, preparing you for deeper dives if you choose.

What if I’m still confused after going through the explanations?

Our aim is maximum clarity! If something still doesn’t click, we encourage you to re-read the explanation or even do a quick search on the specific term. The guide is designed to be a solid foundation, but sometimes a little extra context from another source can really cement your understanding.