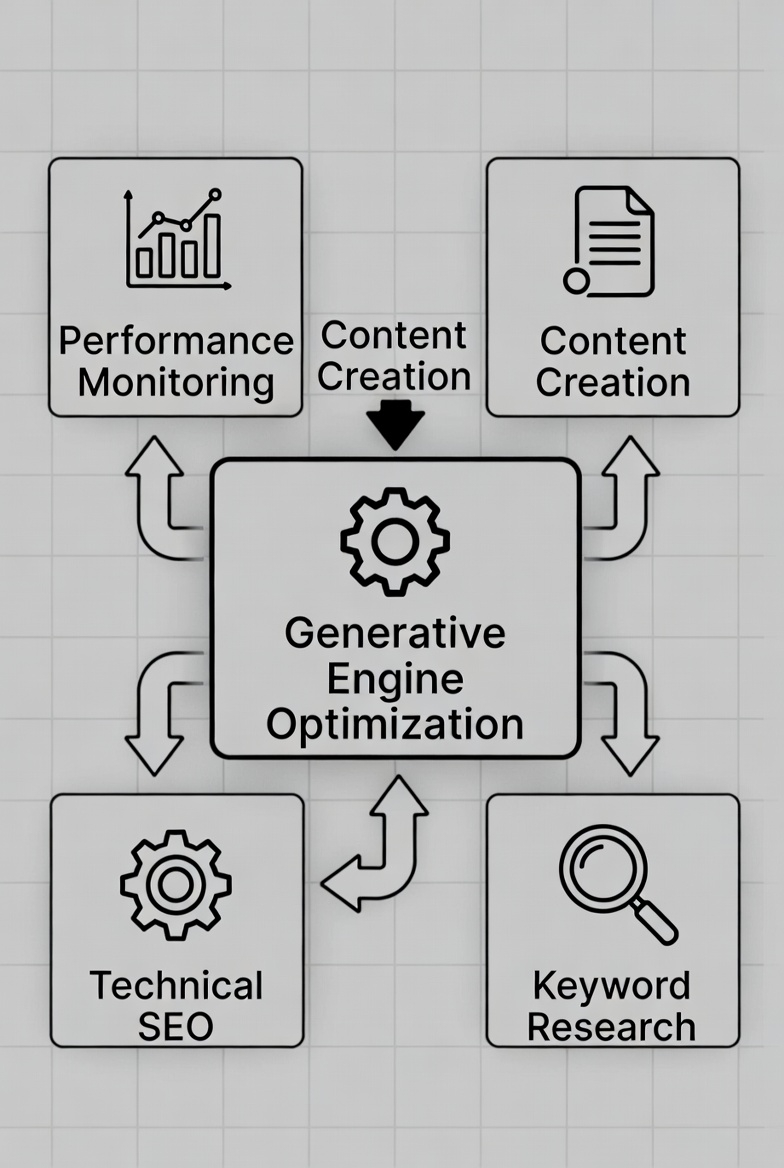

Achieving High-Fidelity Generative Engine Optimization (GEO)

Generative Engine Optimization (GEO) is no longer about clever prompts alone. Sustainable performance in AI-driven discovery systems requires disciplined generative content practices that emphasize factual grounding, semantic coherence, and ethical integrity. As large language models (LLMs) increasingly mediate how users access information, content must be engineered not only for readability but also for machine interpretability, verifiability, and trust. This shift demands attention to model biases, hallucination control, retrieval-augmented generation (RAG), and transparent data provenance. High-performing generative content is therefore a product of architecture, evaluation rigor, and governance, not of generation alone.

Architecting Ethical AI with Content Provenance

Content provenance has become foundational to trustworthy generative ecosystems. Simple disclosure labels are insufficient; robust provenance establishes a verifiable lineage describing model origin, prompts, transformations, and human oversight. Such traceability allows downstream systems to assess reliability, attribute responsibility, and combat misinformation.

Modern provenance frameworks often combine cryptographic hashing, metadata manifests, and digital watermarking. When content is generated, a unique hash and generation metadata (model identifiers, timestamps, prompt references) can be embedded directly in the asset or recorded in an immutable registry. Emerging standards such as the C2PA (Coalition for Content Provenance and Authenticity) specification define tamper-evident manifests designed to persist as content moves across platforms. Early implementations, including those promoted by the Adobe-led Content Authenticity Initiative, suggest that embedding provenance adds modest overhead while strengthening trust signals.
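To make the hash-and-manifest idea concrete, here is a minimal Python sketch. It assumes a hypothetical manifest schema (`content_sha256`, `model_id`, `prompt_sha256`, `generated_at`) and a placeholder model name; it is not the C2PA manifest format, and it only hashes. A production system would also sign the manifest and then embed or register it.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_hex(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def build_manifest(content: str, model_id: str, prompt: str) -> dict:
    """Record a content hash plus generation metadata (hypothetical schema, not C2PA)."""
    return {
        "content_sha256": sha256_hex(content),
        "model_id": model_id,
        # Hash the prompt instead of storing it, so the manifest can be shared
        # without exposing the prompt text itself.
        "prompt_sha256": sha256_hex(prompt),
        "generated_at": datetime.now(timezone.utc).isoformat(),
    }


def verify_content(content: str, manifest: dict) -> bool:
    """True if the content still matches the hash recorded at generation time."""
    return sha256_hex(content) == manifest["content_sha256"]


if __name__ == "__main__":
    article = "GEO rewards factual, well-structured content."
    manifest = build_manifest(article, model_id="example-model-v1", prompt="Summarize GEO.")
    print(json.dumps(manifest, indent=2))
    print(verify_content(article, manifest))        # True
    print(verify_content(article + "!", manifest))  # False
```

Hashing the prompt rather than storing it lets the manifest travel with published content while still allowing an auditor who holds the original prompt to confirm the match.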

However, provenance design involves pragmatic trade-offs. Capturing every intermediate state may introduce latency and storage burdens, especially at scale. Many production systems therefore record provenance at critical checkpoints or final publication stages. The objective is not maximal logging, but meaningful verification that aligns with performance constraints and content risk levels.
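Recording provenance only at selected checkpoints can be sketched as below. The risk-to-checkpoint policy (`CHECKPOINTS_BY_RISK`) and the stage names are hypothetical. Each record stores the hash of the previous record, so editing or reordering an earlier entry breaks verification.

```python
import hashlib
import json

# Hypothetical policy: which pipeline stages get a provenance record per risk level.
CHECKPOINTS_BY_RISK = {
    "low": {"publish"},
    "high": {"draft", "human_review", "publish"},
}


def _entry_hash(entry: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes identically.
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode("utf-8")).hexdigest()


def record_checkpoint(log: list, stage: str, content: str, risk: str) -> None:
    """Append a chained record only if this stage is a checkpoint for the risk level."""
    if stage not in CHECKPOINTS_BY_RISK[risk]:
        return
    entry = {
        "stage": stage,
        "content_sha256": hashlib.sha256(content.encode("utf-8")).hexdigest(),
        "prev_hash": log[-1]["hash"] if log else None,
    }
    entry["hash"] = _entry_hash(entry)  # hash computed before the "hash" key exists
    log.append(entry)


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited or reordered record breaks the chain."""
    prev = None
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev or _entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    for stage in ["draft", "human_review", "publish"]:
        record_checkpoint(log, stage, f"content at {stage}", risk="high")
    print(len(log), verify_chain(log))  # 3 True
```

With `risk="low"` the same loop would write a single record at publication, trading audit depth for lower storage and latency.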

Detecting and Mitigating Algorithmic Bias

Bias remains one of the most persistent challenges in generative AI. Because LLMs inherit statistical imbalances from training data, outputs may unintentionally reinforce stereotypes or distort representation. Left unchecked, biased content degrades user trust and may trigger adverse ranking effects in AI-mediated environments.

Effective bias mitigation requires layered defenses. At the input stage, prompts can explicitly request diverse or neutral framing, reducing skewed output distributions. After generation, bias detection models and statistical audits evaluate representational patterns. Methods such as the Word Embedding Association Test (WEAT) quantify latent associations between target concepts and attributes in embedding space, while computer vision classifiers can assess demographic representation in generated images.
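As an illustration of WEAT-style measurement, this sketch computes the WEAT effect size for target sets X and Y against attribute sets A and B. The two-dimensional vectors are toy values; a real audit would use embeddings from the model under review. The original test also applies a permutation test for significance, which is omitted here, and this sketch uses the sample standard deviation.

```python
import math
from statistics import mean, stdev


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def association(w, A, B):
    """s(w, A, B): mean similarity of w to attribute set A minus mean similarity to B."""
    return mean(cosine(w, a) for a in A) - mean(cosine(w, b) for b in B)


def weat_effect_size(X, Y, A, B):
    """Difference in mean association of target sets X and Y with A versus B,
    normalized by the sample standard deviation over all target words."""
    s_x = [association(x, A, B) for x in X]
    s_y = [association(y, A, B) for y in Y]
    return (mean(s_x) - mean(s_y)) / stdev(s_x + s_y)


if __name__ == "__main__":
    # Toy 2-D "embeddings": X leans toward A, Y leans toward B.
    X = [[1.0, 0.0], [0.9, 0.1]]
    Y = [[0.0, 1.0], [0.1, 0.9]]
    A = [[1.0, 0.0]]
    B = [[0.0, 1.0]]
    print(round(weat_effect_size(X, Y, A, B), 2))
```

A positive value means X is more strongly associated with A (and Y with B); values near zero suggest no measurable differential association.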

Mitigation strategies vary by architecture. Training-time interventions include data re-weighting, augmentation, and adversarial debiasing. Inference-time controls may involve constrained decoding or embedding adjustments that steer models away from problematic correlations. Yet aggressive debiasing can affect fluency or specificity, producing overly generic outputs. Teams must therefore manage a “bias-quality balance,” ensuring ethical safeguards without eroding relevance or expressiveness.
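Data re-weighting can be sketched as follows, assuming each training example carries a group attribute and a binary label. The scheme shown is the reweighing method of Kamiran and Calders (also available as a preprocessing algorithm in AI Fairness 360): each example is weighted by how much more or less common its (group, label) combination is than independence would predict.

```python
from collections import Counter


def reweigh(groups, labels):
    """Weight each example by P(group) * P(label) / P(group, label).

    Combinations rarer than independence predicts get weights above 1;
    over-represented combinations get weights below 1."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] / n) * (label_counts[y] / n) / (joint_counts[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]


if __name__ == "__main__":
    groups = ["a", "a", "a", "b"]
    labels = [1, 1, 0, 0]
    print(reweigh(groups, labels))  # [0.75, 0.75, 1.5, 0.5]
```

The weights are then passed to a training routine that accepts per-sample weights; the data itself is left unchanged, which avoids the distortion that resampling can introduce.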

Frameworks such as IBM AI Fairness 360 illustrate how bias metrics can be monitored continuously. The most resilient systems treat bias mitigation not as a one-time correction but as an iterative evaluation cycle.
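A continuous audit can be as simple as recomputing a fairness metric on each batch of outputs. The sketch below computes disparate impact (each group's favorable-outcome rate divided by a reference group's rate) and flags groups under the commonly cited 0.8 threshold. What counts as a "favorable outcome" for generated content, and which group serves as the reference, are hypothetical choices a team would need to define.

```python
def selection_rate(outcomes):
    """Share of favorable (1) outcomes in a list of 0/1 flags."""
    return sum(outcomes) / len(outcomes)


def disparate_impact(outcomes_by_group, reference):
    """Each group's favorable-outcome rate divided by the reference group's rate."""
    base = selection_rate(outcomes_by_group[reference])
    if base == 0:
        raise ValueError("reference group has no favorable outcomes")
    return {
        group: selection_rate(outcomes) / base
        for group, outcomes in outcomes_by_group.items()
        if group != reference
    }


def audit_batch(outcomes_by_group, reference, threshold=0.8):
    """Return groups whose disparate-impact ratio falls below the threshold."""
    ratios = disparate_impact(outcomes_by_group, reference)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    batch = {
        "group_ref": [1, 1, 1, 1, 0],
        "group_1": [1, 1, 1, 1, 0],
        "group_2": [1, 0, 0, 0, 0],
    }
    print(audit_batch(batch, reference="group_ref"))  # ['group_2']
```

Running this on every batch and alerting on any flagged group turns the metric into the iterative evaluation cycle described above.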

Optimizing for Generative Discoverability

GEO differs fundamentally from traditional SEO. Instead of optimizing for keyword matching, GEO prioritizes semantic clarity, entity relationships, and contextual structure: the features generative engines rely on when synthesizing responses.

High-visibility generative content exhibits strong semantic density. Concepts are defined explicitly, relationships are logically organized, and ambiguity is minimized. Structured data, including schema markup and machine-readable annotations, strengthens entity resolution and retrieval accuracy. Content designed for generative systems often benefits from modular layouts: definitions, Q&A segments, and clearly delineated conceptual blocks.
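For example, Q&A segments can be exposed to machines as schema.org `FAQPage` markup in JSON-LD. The sketch below builds that structure from question-answer pairs; the sample question and answer are illustrative.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


if __name__ == "__main__":
    markup = faq_jsonld([
        ("What is GEO?",
         "Generative Engine Optimization structures content so generative engines can interpret it."),
    ])
    # The serialized output is typically placed in a <script type="application/ld+json"> tag.
    print(json.dumps(markup, indent=2))
```

Generating the markup from the same source as the visible Q&A keeps the two in sync, so the machine-readable answers never drift from what readers see.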

Testing content directly against generative models provides actionable diagnostics. Summarization accuracy, fact extraction performance, and coherence evaluations reveal whether key insights survive synthesis. Low extraction reliability often signals structural or semantic weaknesses in the content rather than model failure. GEO thus becomes a feedback-driven refinement process focused as much on machine comprehension as on human engagement.
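A minimal fact-retention check might look like this. `summarize` is a hypothetical callable wrapping whichever generative model you test against, and matching is a naive case-insensitive substring search; a real evaluation would likely need fuzzier matching.

```python
from typing import Callable, List, Tuple


def fact_retention(content: str, key_facts: List[str],
                   summarize: Callable[[str], str]) -> Tuple[float, List[str]]:
    """Summarize the content with a model and report which key facts survived.

    Returns (retention score in [0, 1], list of missing facts)."""
    summary = summarize(content).lower()
    missing = [fact for fact in key_facts if fact.lower() not in summary]
    return 1 - len(missing) / len(key_facts), missing


def first_sentence(text: str) -> str:
    # Stub "model" that keeps only the first sentence, standing in for a real API call.
    return text.split(". ")[0] + "."


if __name__ == "__main__":
    doc = "RAG grounds answers in retrieved sources. C2PA records provenance."
    print(fact_retention(doc, ["RAG", "C2PA"], first_sentence))  # (0.5, ['C2PA'])
```

Tracking which facts go missing, not just the score, points directly at the passages that need clearer structure.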

Ensuring Factual Accuracy and Coherence

Content quality in generative environments is inseparable from factual integrity. Hallucinated or inconsistent outputs rapidly undermine credibility, and generative engines increasingly penalize unreliable material. Preventing such degradation requires grounding mechanisms that anchor generation to validated knowledge sources.

Retrieval-Augmented Generation (RAG) architectures address this challenge by injecting external context into model inference. Instead of relying solely on the model's internal (parametric) memory, a RAG system retrieves relevant passages from curated corpora or trusted databases and conditions generation on them, keeping outputs closer to verifiable sources. Published evaluations generally report fewer hallucinations and more reliable answers when grounding is applied.
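The retrieve-then-generate pattern can be sketched as follows. Retrieval here is simple term overlap, a stand-in for BM25 or embedding search, and `grounded_prompt` only builds the prompt string; sending it to a model is left out. The function names and prompt wording are assumptions.

```python
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query, passages, k=2):
    """Rank passages by term overlap with the query and return the top k."""
    q = Counter(tokenize(query))

    def score(passage):
        t = Counter(tokenize(passage))
        return sum(min(q[w], t[w]) for w in q)

    return sorted(passages, key=score, reverse=True)[:k]


def grounded_prompt(query, passages, k=2):
    """Build a prompt that instructs the model to answer only from retrieved context."""
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(retrieve(query, passages, k)))
    return (
        "Answer using only the sources below. If they do not contain the answer, say so.\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    corpus = [
        "C2PA defines tamper-evident provenance manifests.",
        "WEAT measures associations in word embeddings.",
        "RAG retrieves passages before generation.",
    ]
    print(grounded_prompt("What does C2PA define for provenance?", corpus, k=1))
```

Numbering the sources in the prompt also makes it straightforward to ask the model for citations that can be checked against the retrieved passages.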

Coherence and linguistic precision benefit from post-processing pipelines. LanguageTool provides rule-based grammar and style checking, and spaCy supports dependency parsing and, through extension components, coreference resolution. Fine-tuning models on domain-specific, high-quality datasets further stabilizes narrative flow and stylistic consistency. Excessive correction, however, risks flattening nuance or creativity, so effective pipelines balance automated validation with preservation of expressive variation.
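As a small illustration of automated validation, the sketch below runs two rule-based checks: doubled words and overlong sentences. It is a deliberately simple, hypothetical stand-in, not a substitute for LanguageTool or spaCy, and the 35-word limit is an arbitrary assumption.

```python
import re


def lint(text, max_words=35):
    """Return simple style issues: doubled words and sentences over max_words."""
    issues = []
    # A word immediately followed by the same word ("the the"), case-insensitive.
    for match in re.finditer(r"\b(\w+)\s+\1\b", text, flags=re.IGNORECASE):
        issues.append(f"repeated word: '{match.group(0)}'")
    # Naive sentence split on terminal punctuation followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        n_words = len(sentence.split())
        if n_words > max_words:
            issues.append(f"long sentence ({n_words} words): '{sentence[:40]}...'")
    return issues


if __name__ == "__main__":
    print(lint("This is the the draft. It reads well."))
    # ["repeated word: 'the the'"]
```

Checks like these are best treated as flags for an editor rather than automatic rewrites, which keeps correction from flattening deliberate stylistic choices.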

Designing Human-AI Collaboration

Pure automation rarely yields optimal results, particularly for nuanced or high-stakes content. Human-AI collaboration introduces corrective intelligence, contextual judgment, and ethical sensitivity that models alone cannot guarantee.

Collaborative workflows typically involve iterative refinement loops. AI systems generate drafts or variations, while human editors evaluate factual accuracy, tone, and alignment with objectives. Human feedback also serves as a learning signal, informing model adjustments and improving future outputs. Experience with hybrid content pipelines suggests they can reduce error rates while still delivering substantial gains in production efficiency.
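The refinement loop can be sketched as below, assuming hypothetical `generate` (a model call) and `review` (a human editor's verdict) callables. Reviewer notes feed the next draft and accumulate in a log that could later inform model adjustments.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Review:
    approved: bool
    notes: str = ""


def refine(brief: str,
           generate: Callable[[str, Optional[str]], str],
           review: Callable[[str], Review],
           max_rounds: int = 3) -> Tuple[str, bool, List[str]]:
    """Alternate model drafts with human review until approval or the round limit.

    Returns (last draft, whether it was approved, feedback log)."""
    feedback_log: List[str] = []
    notes: Optional[str] = None
    draft = ""
    for _ in range(max_rounds):
        draft = generate(brief, notes)  # the model sees the previous round's notes
        verdict = review(draft)         # a human editor approves or comments
        if verdict.approved:
            return draft, True, feedback_log
        notes = verdict.notes
        feedback_log.append(notes)
    return draft, False, feedback_log
```

Capping the number of rounds bounds the latency human review adds; an unapproved draft after the final round is returned with `False` so it can be escalated rather than published.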

Oversight mechanisms are equally critical. Automated monitoring systems flag anomalous outputs or sensitive content patterns for human review. Editorial checkpoints and accountability structures ensure that human involvement is systematic rather than symbolic. While human intervention introduces latency, it significantly enhances reliability, trust, and reputational safety.
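A monitoring hook that routes outputs to human review might look like this. The sensitive-topic patterns, the `model_confidence` score, and the 0.7 cutoff are all hypothetical; a production system would more likely rely on trained classifiers than on keyword rules.

```python
import re
from typing import List

# Hypothetical sensitive-topic patterns (health, finance, legal).
SENSITIVE_PATTERNS = [r"\bdiagnos\w*", r"\binvest\w*", r"\blawsuit\w*"]


def needs_review(text: str, model_confidence: float,
                 min_confidence: float = 0.7) -> List[str]:
    """Return reasons an output should go to a human editor (empty list = no flags)."""
    reasons = []
    if model_confidence < min_confidence:
        reasons.append(f"low confidence ({model_confidence:.2f})")
    for pattern in SENSITIVE_PATTERNS:
        if re.search(pattern, text, flags=re.IGNORECASE):
            reasons.append(f"sensitive pattern: {pattern}")
    return reasons
```

Returning reasons rather than a bare boolean gives editors context for each flag and leaves an audit trail for why content was held.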

Transparency, Usage Policies, and Consent

Transparency in AI usage is both an ethical obligation and a strategic advantage. Users increasingly expect clarity regarding AI involvement, data collection, and personalization mechanisms. Opaque practices erode trust and may trigger regulatory consequences.

Effective transparency includes explicit disclosures and accessible usage policies. Consent management systems capture user preferences that govern how prompts or behavioral data may be used. Regulations such as the EU General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA) exemplify frameworks that prioritize user autonomy and data protection.

Architecturally, consent signals must integrate with data governance pipelines, ensuring collected information is processed only within approved boundaries. Restrictive policies may limit model improvement opportunities, yet permissive or ambiguous practices introduce legal and reputational risks. Sustainable GEO strategies treat transparency not as compliance overhead but as a core trust-building mechanism.
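Consent-aware processing can be sketched as a filter applied before data enters any downstream pipeline. The `ConsentRecord` type and purpose names such as `model_training` are hypothetical; the key design choice is deny-by-default, so users without a consent record are excluded.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class ConsentRecord:
    user_id: str
    purposes: Set[str] = field(default_factory=set)  # e.g. {"analytics", "model_training"}


def filter_by_consent(events: List[Dict], consents: Dict[str, ConsentRecord],
                      purpose: str) -> List[Dict]:
    """Keep only events whose user granted consent for this specific purpose.

    Users with no consent record are excluded (deny by default)."""
    allowed = []
    for event in events:
        record = consents.get(event["user_id"])
        if record is not None and purpose in record.purposes:
            allowed.append(event)
    return allowed
```

Scoping consent per purpose means a user who allows analytics is not silently included in model training data.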

Managing Intellectual Property and Copyright Risks

Intellectual property (IP) considerations remain complex in generative AI. Training data composition, output similarity, and ownership attribution all carry legal implications. Failure to manage IP risks can disrupt content deployment and compromise GEO outcomes.

Responsible practices begin with dataset governance. Training or fine-tuning corpora should consist of licensed, public domain, or proprietary materials with documented usage rights. Similarity detection mechanisms compare generated outputs against protected works, flagging potential conflicts before publication. While filtering improves compliance, excessive constraints may reduce creative flexibility.
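Similarity detection can be approximated with word shingles and Jaccard similarity, as in this sketch. The 5-word shingle size and 0.3 threshold are illustrative assumptions; at larger scale, techniques such as MinHash are commonly used to approximate the same comparison efficiently.

```python
import re
from typing import Dict, List, Set, Tuple


def shingles(text: str, n: int = 5) -> Set[Tuple[str, ...]]:
    """Set of overlapping n-word sequences ("shingles") after lowercasing."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union (0.0 if both empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_similar(generated: str, protected_works: Dict[str, str],
                 threshold: float = 0.3, n: int = 5) -> List[str]:
    """Titles of protected works whose shingle overlap with the output meets the threshold."""
    g = shingles(generated, n)
    return [title for title, text in protected_works.items()
            if jaccard(g, shingles(text, n)) >= threshold]
```

Flagged outputs would go to review before publication rather than being blocked outright, which limits the loss of creative flexibility from overly strict filtering.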

Legal ambiguity surrounding AI-generated ownership necessitates internal policy clarity. Organizations should define rights, usage boundaries, and attribution models proactively. Regular audits, legal review, and traceable generation records collectively strengthen defensibility and operational stability.

Conclusion

Ethical, high-performing generative content emerges from deliberate system design rather than isolated generation tactics. Provenance tracking, bias mitigation, semantic structuring, factual grounding, and human oversight collectively define resilient GEO strategies. Metrics such as fluency or similarity scores are valuable, but long-term impact hinges on credibility, transparency, and user trust. As generative engines increasingly shape digital discovery, responsible content engineering becomes not merely advantageous but essential.

FAQs

What’s this ‘Ultimate Checklist’ all about?

It’s your go-to guide for creating content using AI tools responsibly and effectively. Think of it as a step-by-step resource to ensure your generative AI content is not only high-quality but also ethical, fair, and impactful.

Why should I bother with ethics when making AI content?

Great question! Ignoring ethical considerations can lead to big problems like spreading misinformation, unintentional bias, or even legal complications. Being ethical builds trust with your audience, protects your reputation, and ensures your content is responsible and doesn’t cause harm.

How can I make sure my AI-generated content is actually good and useful?

It’s more than just hitting ‘generate’! The checklist emphasizes giving clear, specific prompts, thoroughly fact-checking AI output, adding your own human touch for nuance and accuracy, and refining until the content truly meets your goals. Quality and utility are key.

What are some common traps to watch out for with generative AI?

Plenty! Things like AI ‘hallucinating’ false details, unintentional bias creeping into the text, potential copyright issues if you’re not careful, or simply producing bland, generic content. The checklist helps you identify and navigate these common pitfalls.

Does the checklist offer advice on avoiding bias in AI output?

Absolutely! Addressing bias is a huge component of ethical content creation. The checklist guides you on how to critically evaluate AI output for fairness, consider diverse perspectives, and actively work to minimize stereotypes and discriminatory language in your content.

Is this checklist only for big companies, or can individuals and small teams use it too?

Nope, it’s for everyone! Whether you’re a student, a freelancer, a small business owner, or part of a large corporation, anyone using generative AI for content creation will find practical steps and actionable advice to improve their process and output.

What’s the biggest benefit I’ll get from using this checklist?

The main benefit is confidence and peace of mind. You’ll gain assurance that your AI-generated content is not only effective and engaging but also ethically sound, responsible, and trustworthy. It helps you unlock AI’s potential without falling into common traps or compromising your values.