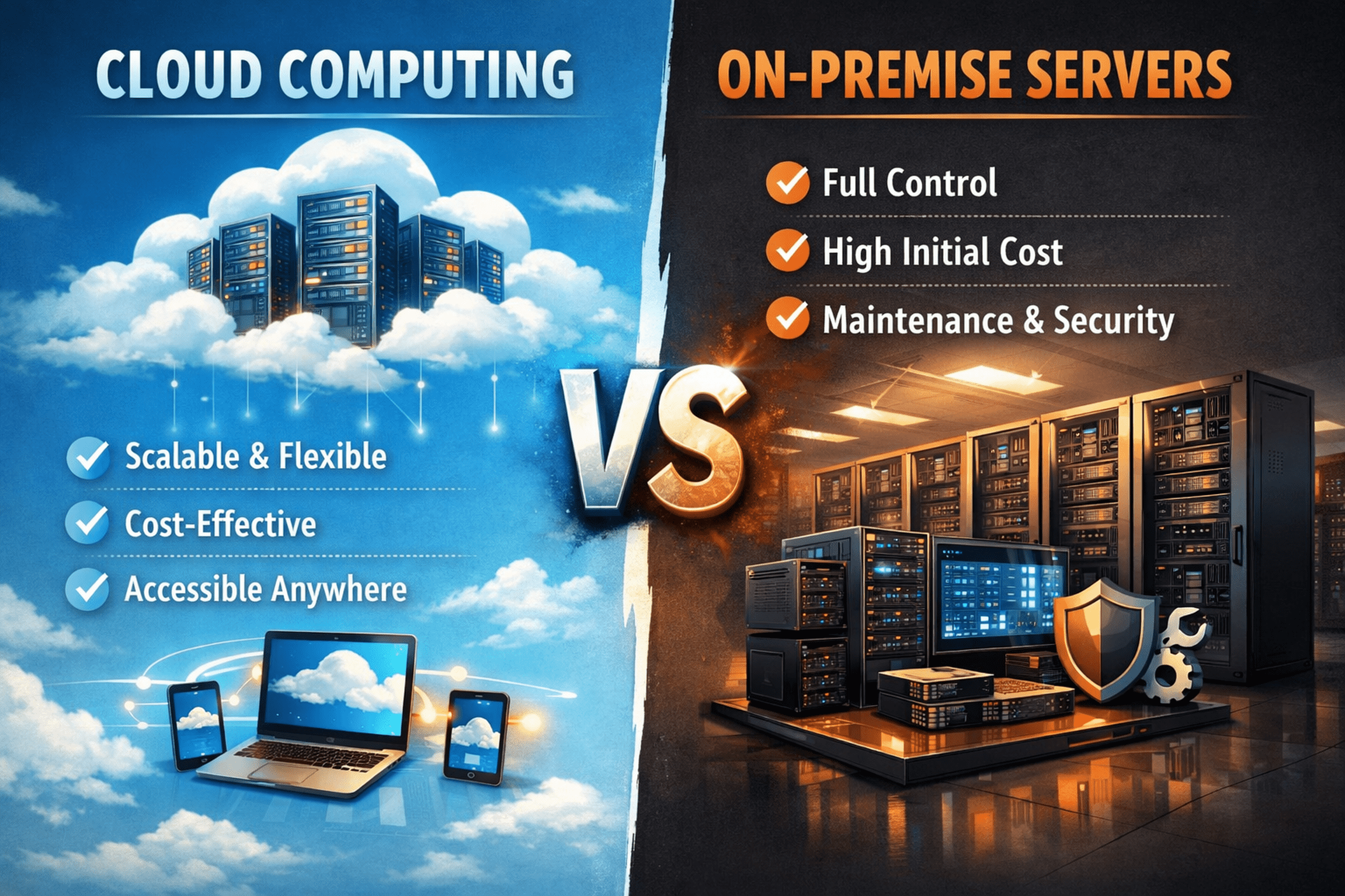

Cloud vs. On-Premise Infrastructure for Answer Engine Optimization (AEO)

In the era of Answer Engine Optimization (AEO), infrastructure decisions directly influence visibility, performance, and resilience. As search systems increasingly reward fast, authoritative, and contextually precise answers, the environment hosting your digital assets becomes a strategic lever rather than a background technical choice. The debate between cloud computing and on-premise servers is therefore not simply financial or operational – it shapes latency, scalability, compliance posture, and your ability to adapt to shifting algorithmic demands.

Why Infrastructure Matters for AEO

AEO performance hinges on speed, reliability, and consistency. Search platforms evaluate user experience metrics such as Largest Contentful Paint (LCP), First Contentful Paint (FCP), and interaction responsiveness (measured in Core Web Vitals as Interaction to Next Paint, INP). Even small delays can affect crawl efficiency, indexing frequency, and eligibility for direct answer features. Your hosting model governs how efficiently content is delivered, how workloads scale under traffic spikes, and how resilient systems remain during disruptions.
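To make these metrics concrete, the following is a minimal sketch of how field measurements might be rated. The thresholds reflect Google's published guidance for LCP and FCP at the time of writing and should be verified before use; the function names `rate_metric` and `p75` are hypothetical, and the 75th-percentile helper uses a simple nearest-rank method rather than any tool's exact calculation.

```python
import math

# Thresholds in seconds: (upper bound for "good", upper bound for "needs improvement").
# Assumption: values taken from Google's public guidance; confirm before relying on them.
THRESHOLDS = {
    "LCP": (2.5, 4.0),
    "FCP": (1.8, 3.0),
}


def rate_metric(name: str, seconds: float) -> str:
    """Classify a single measurement as good, needs improvement, or poor."""
    good_max, needs_improvement_max = THRESHOLDS[name]
    if seconds <= good_max:
        return "good"
    if seconds <= needs_improvement_max:
        return "needs improvement"
    return "poor"


def p75(samples: list[float]) -> float:
    """Nearest-rank 75th percentile of a non-empty list of samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


if __name__ == "__main__":
    lcp_samples = [1.9, 2.2, 2.4, 3.1]  # hypothetical field data, in seconds
    print(rate_metric("LCP", p75(lcp_samples)))
```

Rating the 75th percentile rather than the average keeps a handful of fast page loads from masking a slow experience for a large share of visitors.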

Infrastructure also dictates operational agility. Algorithm updates, traffic surges, or new content formats may require rapid architectural adjustments. Environments that allow quick provisioning and experimentation naturally support more adaptive AEO strategies.

Performance and Latency Considerations

Latency affects both user experience and search visibility. Cloud platforms typically excel at global content delivery due to distributed regions and integrated Content Delivery Networks (CDNs). Caching assets at edge locations reduces round-trip time and network hops, often yielding substantial improvements in page rendering metrics for geographically diverse audiences.

Cloud environments achieve this through virtualization and resource abstraction. Workloads can be deployed closer to users, while CDNs accelerate static content delivery. For internationally accessed platforms, this architecture frequently results in faster perceived performance and improved Core Web Vitals.
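The effect of edge caching can be approximated with a simple expected-value model. This is a rough sketch, not a measurement method: it assumes every request pays the round trip to the nearest edge and that only cache misses also pay the trip from the edge to the origin. The function name and all numbers are hypothetical.

```python
def expected_latency_ms(hit_ratio: float, edge_rtt_ms: float, origin_rtt_ms: float) -> float:
    """Average network latency per request behind an edge cache.

    Simplifying assumption: a hit costs one user-to-edge round trip; a miss
    costs that same round trip plus an edge-to-origin round trip.
    """
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    miss_ratio = 1.0 - hit_ratio
    return edge_rtt_ms + miss_ratio * origin_rtt_ms


if __name__ == "__main__":
    # Hypothetical figures: 20 ms to the edge, 180 ms from the edge to the origin.
    for ratio in (0.0, 0.75, 1.0):
        print(ratio, expected_latency_ms(ratio, edge_rtt_ms=20, origin_rtt_ms=180))
```

Under these assumptions, raising the cache hit ratio matters more the farther the audience sits from the origin, which is why distributed delivery tends to help geographically diverse traffic most.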

On-premise deployments, however, can deliver exceptional low-latency performance within localized regions. Dedicated hardware, direct fiber connectivity, and absence of virtualization overhead may produce extremely stable response times. This advantage becomes meaningful when audiences are geographically concentrated or when workloads demand deterministic performance.

The trade-off lies in distribution versus control. Cloud optimizes for global reach and elastic responsiveness, whereas on-premise prioritizes predictability and dedicated resource allocation.

Scalability and Elasticity

AEO workloads are inherently variable. Trending topics, viral content, or unpredictable demand patterns require infrastructure capable of rapid scaling. Cloud platforms are designed around elasticity, enabling automatic provisioning of compute resources based on real-time metrics such as CPU utilization or request volume.

This capability allows organizations to align costs with usage. Resources scale up during peak demand and contract during idle periods, minimizing waste while preserving performance. Such responsiveness is particularly valuable for content platforms, search-driven applications, or dynamic answer systems.
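Metric-driven scaling can be illustrated with a proportional rule: scale the instance count by the ratio of the observed metric to its target, then clamp the result to configured bounds. The Kubernetes Horizontal Pod Autoscaler documents a rule of this shape; the sketch below is a simplified illustration, not any provider's implementation, and the function name, parameters, and limits are assumptions.

```python
import math


def desired_instances(
    current_instances: int,
    observed_metric: float,
    target_metric: float,
    min_instances: int = 1,
    max_instances: int = 20,
) -> int:
    """Proportional scaling: ceil(current * observed / target), clamped to bounds.

    The metric may be average CPU utilization (percent) or requests per
    instance; only the ratio to the target matters.
    """
    if current_instances < 1:
        raise ValueError("current_instances must be at least 1")
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_instances * observed_metric / target_metric)
    return max(min_instances, min(max_instances, raw))


if __name__ == "__main__":
    # Hypothetical spike: 4 instances at 90% CPU against a 60% target.
    print(desired_instances(4, observed_metric=90, target_metric=60))
    # Hypothetical idle period: 4 instances at 15% CPU, with a floor of 2.
    print(desired_instances(4, observed_metric=15, target_metric=60, min_instances=2))
```

The minimum bound protects availability during quiet periods, while the maximum bound caps spend if a metric misbehaves or traffic is abusive.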

On-premise environments struggle with this flexibility. Scaling typically requires procurement cycles, hardware installation, and network configuration, all of which introduce delays and capital expenditure. While over-provisioning can mitigate sudden spikes, it leads to underutilized assets and higher long-term costs.

For predictable, consistently high workloads, on-premise infrastructure may still prove economical. But for burstable or uncertain demand profiles, cloud elasticity is usually the more cost-efficient choice because capacity tracks demand instead of being provisioned for the peak.

Security and Compliance Dynamics

Security considerations differ fundamentally between models. Cloud providers operate under a shared responsibility framework: the provider secures physical infrastructure and foundational services, while customers secure applications, configurations, and data governance.

Modern cloud platforms invest heavily in physical security, network defense, encryption capabilities, and compliance certifications. This often results in a security baseline exceeding what many organizations can independently maintain. However, misconfiguration risks remain a critical concern, making governance and monitoring essential.

On-premise environments grant absolute control but require full organizational ownership of security controls. Physical access management, intrusion detection, patching, and vulnerability mitigation must all be internally maintained. This model suits highly regulated industries or environments with strict data sovereignty requirements but imposes significant operational overhead.

Compliance strategy frequently becomes the deciding factor. Regulatory mandates, audit requirements, or jurisdictional constraints may necessitate localized infrastructure, while less restrictive contexts benefit from cloud scalability and managed safeguards.

Total Cost of Ownership (TCO)

Financial comparisons between cloud and on-premise systems extend beyond CapEx versus OpEx distinctions. On-premise deployments demand substantial upfront investment in hardware, facilities, power infrastructure, and specialized personnel. Ongoing costs include maintenance, upgrades, energy consumption, and staffing.

Cloud models eliminate large initial expenditures but introduce variable operational costs. Compute usage, storage allocation, managed services, and data transfer fees all contribute to long-term spend. Without disciplined cost governance, sustained workloads may exceed anticipated budgets.

A rigorous multi-year TCO analysis is therefore critical. Evaluations must include infrastructure costs, staffing, scalability requirements, network egress patterns, and opportunity costs tied to deployment speed and innovation cycles.
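A first-pass version of such an analysis can be sketched as a break-even calculation. This is deliberately simplified: it assumes flat monthly costs, ignores hardware refresh, discounting, and egress variability, and every figure and function name is hypothetical.

```python
from typing import Optional


def cumulative_on_prem(months: int, capex: float, monthly_opex: float) -> float:
    """Upfront investment plus flat monthly running costs (power, staff, maintenance)."""
    return capex + monthly_opex * months


def cumulative_cloud(months: int, monthly_spend: float) -> float:
    """Flat monthly cloud spend (compute, storage, managed services, egress)."""
    return monthly_spend * months


def break_even_month(
    capex: float,
    on_prem_monthly: float,
    cloud_monthly: float,
    horizon_months: int = 60,
) -> Optional[int]:
    """First month in which on-premise is no more expensive than cloud, or None."""
    for month in range(1, horizon_months + 1):
        if cumulative_on_prem(month, capex, on_prem_monthly) <= cumulative_cloud(month, cloud_monthly):
            return month
    return None


if __name__ == "__main__":
    # Hypothetical inputs: 120,000 upfront and 3,000/month on-premise vs 6,000/month in the cloud.
    print(break_even_month(capex=120_000, on_prem_monthly=3_000, cloud_monthly=6_000))
```

If the function returns `None`, on-premise never catches up within the chosen horizon under these inputs. A fuller model would add the staffing, egress, and opportunity-cost terms listed above.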

Hybrid and Multi-Cloud Approaches

Modern infrastructure strategies increasingly avoid binary choices. Hybrid architectures combine on-premise control with cloud scalability, enabling sensitive systems to remain locally managed while leveraging cloud resources for elastic workloads. Dedicated connectivity options facilitate secure, low-latency integration between environments.

Multi-cloud strategies, meanwhile, distribute workloads across multiple providers to mitigate vendor dependency and leverage specialized capabilities. While these approaches enhance flexibility and resilience, they introduce architectural and operational complexity requiring advanced orchestration and monitoring practices.

Strategic workload placement becomes the governing principle – systems reside where performance, compliance, and economics align optimally.

Disaster Recovery and Business Continuity

Continuous availability is vital for AEO-dependent platforms. Outages disrupt indexing, degrade user trust, and erode search visibility. On-premise disaster recovery solutions often require redundant facilities and synchronous replication mechanisms, significantly increasing costs and operational demands.

Cloud platforms inherently simplify resilience through geographically distributed regions and availability zones. Automated failover, managed replication, and infrastructure-as-code enable rapid recovery with minimal manual intervention. This model frequently delivers superior Recovery Time Objectives (RTO) and Recovery Point Objectives (RPO) at lower overall cost.
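Whether a recovery design meets its objectives can be checked with simple arithmetic. The sketch below rests on two simplifying assumptions: worst-case data loss equals the interval between replicated copies (zero for synchronous replication), and recovery time is detection time plus failover time. The class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RecoveryPlan:
    replication_interval_s: float  # seconds between replicated copies; 0 if synchronous
    detection_s: float             # time to notice the outage
    failover_s: float              # time to redirect traffic to the standby

    def worst_case_rpo_s(self) -> float:
        """Assumption: at most one replication interval of data can be lost."""
        return self.replication_interval_s

    def estimated_rto_s(self) -> float:
        """Assumption: recovery time is detection plus failover, nothing else."""
        return self.detection_s + self.failover_s


def meets_objectives(plan: RecoveryPlan, rto_target_s: float, rpo_target_s: float) -> bool:
    """True only if both the RTO and the RPO targets are satisfied."""
    return (
        plan.estimated_rto_s() <= rto_target_s
        and plan.worst_case_rpo_s() <= rpo_target_s
    )


if __name__ == "__main__":
    # Hypothetical plan: replicate every 5 minutes, detect in 1 minute, fail over in 4.
    plan = RecoveryPlan(replication_interval_s=300, detection_s=60, failover_s=240)
    print(meets_objectives(plan, rto_target_s=600, rpo_target_s=300))
    print(meets_objectives(plan, rto_target_s=600, rpo_target_s=60))
```

Tightening the RPO target is what typically forces more frequent or synchronous replication, which is where the cost gap between self-managed redundant facilities and managed cloud replication tends to appear.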

Strategic Takeaway

Infrastructure selection for AEO is not a static decision but an evolving strategic alignment. Cloud environments provide agility, elasticity, and global performance advantages, while on-premise deployments offer deterministic control and predictable performance. The optimal architecture frequently blends both, guided by workload characteristics, compliance constraints, latency requirements, and financial modeling.

Organizations that continuously reassess performance metrics, cost patterns, and operational demands maintain a structural advantage. In a landscape where speed, reliability, and adaptability dictate visibility, infrastructure becomes a competitive instrument rather than a mere hosting choice.

FAQs

What’s the core difference between cloud computing and on-premise servers?

Simply put, on-premise means you own, host and manage all your IT infrastructure (servers, storage, networking) in your own office or data center. Cloud computing means you’re renting computing resources – like virtual servers, storage and software – from a third-party provider over the internet. They handle the hardware and much of the maintenance; you just use the services.

Which option is cheaper in the long run?

It’s not a straightforward answer! Cloud computing typically has lower upfront costs since you’re not buying expensive hardware. You pay a subscription or usage fee, which can be great for budgeting and cash flow. On-premise requires a significant initial investment in hardware, software licenses and infrastructure. Once purchased, your recurring costs might be lower, aside from electricity, cooling and maintenance. Cloud can often be more cost-effective for businesses with fluctuating demands, while on-premise might prove cheaper for very stable, predictable and heavy workloads over many years.

How do these options handle a growing business?

Cloud computing shines here. You can easily scale your resources up or down as your business needs change, often with just a few clicks or automated processes. Need more storage or computing power for a busy season? No problem. With on-premise, scaling typically means buying, installing and configuring new hardware, which takes time and money and can leave you over-provisioned if demand drops.

Is one more secure than the other?

Both can be highly secure, but the responsibility model differs. With on-premise, you are entirely responsible for all aspects of security. Cloud providers invest heavily in cutting-edge security infrastructure, expertise and compliance, often surpassing what a single business can afford. In the cloud, however, security is a shared responsibility – the provider secures the underlying infrastructure, while you’re responsible for securing your data, applications and configurations within their environment. It boils down to who you trust more with your security: your own team or a specialized cloud provider.

What if I need a lot of control over my IT environment?

On-premise gives you maximum control. You dictate every single aspect, from the brand of hardware to the specific software versions, network configurations and security policies. Cloud computing, while offering plenty of flexibility, means you have less granular control over the underlying physical infrastructure. If you have very specific hardware requirements, need to run legacy systems, or have unique regulatory demands that require deep control, on-premise might be the better fit.

Who handles the IT grunt work like maintenance and updates?

With on-premise servers, your internal IT team or designated staff are responsible for all maintenance, hardware repairs, software updates, patching and troubleshooting. In the cloud, the provider takes care of much of the underlying infrastructure management, freeing up your IT staff to focus on more strategic tasks directly related to your business applications and data rather than keeping the lights on.

Does performance differ significantly between cloud and on-premise?

Performance can vary greatly for both, depending on your specific setup and needs. On-premise allows you to custom-build your infrastructure for optimal performance and very low latency if your users are local. Cloud providers, with their massive, distributed data centers, can offer very high performance, redundancy and global reach. However, internet latency can be a factor for cloud access, and performance can sometimes be affected by shared resources if the environment is not properly configured. It really depends on your application’s specific demands and user locations.