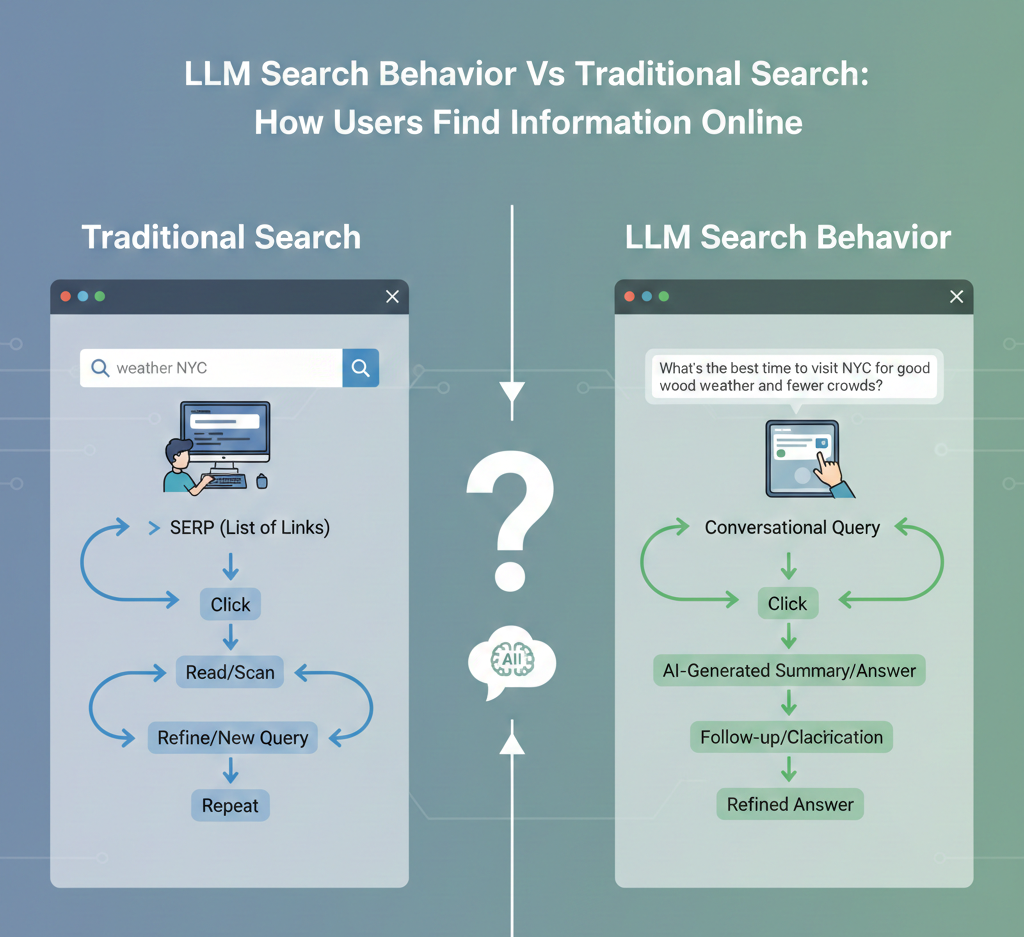

When users ask a conversational AI to “compare CRISPR delivery methods” and receive a synthesized answer with citations, they reveal a shift that traditional keyword search never anticipated. LLM search behavior prioritizes intent, context and iterative dialogue, turning queries into conversations rather than one-off lookups. In 2024 and 2025, tools like Google’s AI Overviews, Bing Copilot and retrieval-augmented platforms such as Perplexity changed how information surfaces, reducing link hopping while increasing reliance on model reasoning and source blending. This evolution reshapes discovery: users refine prompts, request summaries or code and expect up-to-date grounding, while traditional search still optimizes ranking signals and blue links. Understanding how these modes differ explains why zero-click answers are rising, why citations matter again and how information literacy now includes prompt craft and model trust.

How People Traditionally Search for Information Online

Traditional search refers to how users interact with search engines like Google, Bing, or DuckDuckGo by typing keywords and reviewing a ranked list of links. This model has shaped online behavior for more than two decades and still dominates a large portion of web traffic today. At its core, traditional search relies on:

- Keyword-based queries (for example: “best budget laptop 2025”)

- Search engine algorithms that rank web pages

- User-driven exploration by clicking, scanning and comparing sources

From personal experience working on SEO projects, I’ve seen how users often reformulate the same query multiple times. Someone researching health symptoms might search:

- “headache and fatigue causes”

- “is headache and fatigue serious”

- “when to see a doctor for fatigue”

This behavior highlights a key aspect of traditional search: users do much of the interpretation themselves. Google’s Search Quality Rater Guidelines describe the goal of surfacing authoritative pages, but the final understanding still comes from the reader.

What Is LLM Search Behavior and Why It Matters

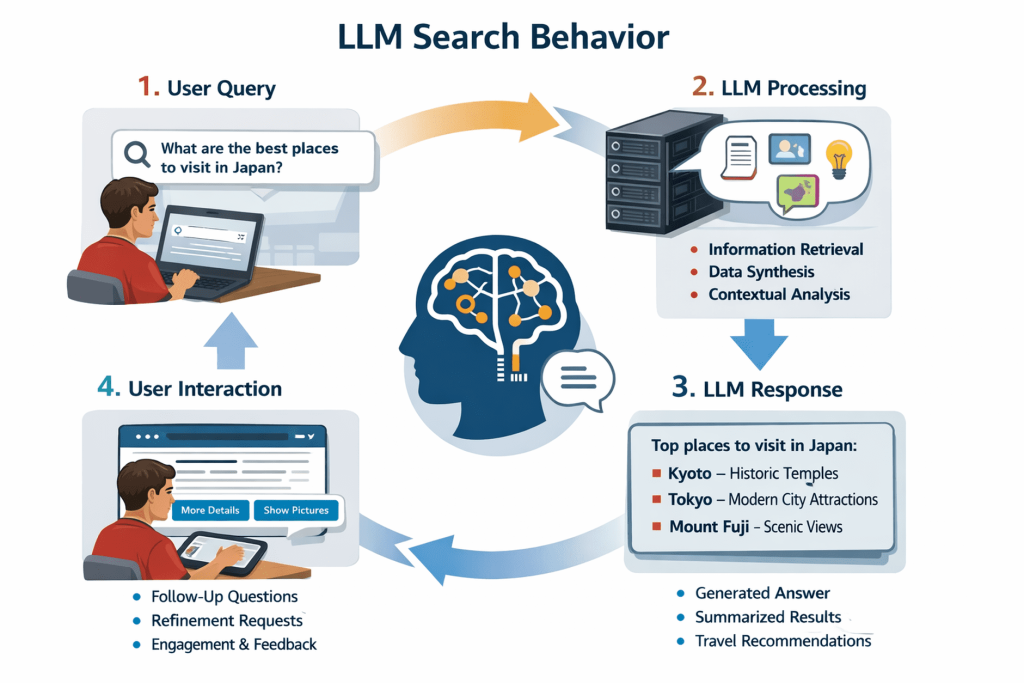

LLM search behavior describes how users seek information through Large Language Models (LLMs) such as ChatGPT, Gemini, Claude, or Perplexity AI. Instead of receiving a list of links, users get synthesized, conversational answers. LLMs are AI systems trained on vast text datasets to model context, intent and language patterns. Institutions like OpenAI and Stanford’s Center for Research on Foundation Models describe them as models capable of reasoning, summarizing and generating human-like responses. Key characteristics of LLM search behavior include:

- Natural language questions instead of keyword fragments

- Follow-up questions within the same conversation

- Expectation of direct, actionable answers

For example, instead of searching “best credit card for travel,” users might ask:

“Can you recommend a travel credit card for someone who flies twice a year and hates annual fees?”

This shift matters because it changes how information is discovered, consumed and trusted. The user is no longer browsing the web; they are interacting with a knowledge interface.

Key Differences Between LLM Search Behavior and Traditional Search

The contrast between these two models becomes clearer when you compare them side by side.

| Aspect | Traditional Search | LLM Search Behavior |
|---|---|---|
| Query Style | Short keywords or phrases | Full questions and conversational prompts |
| Results Format | List of links and snippets | Single synthesized response |
| User Effort | High (multiple clicks and comparisons) | Lower (data summarized) |
| Context Awareness | Limited | High, with follow-up memory |
| Trust Signals | Brand reputation, backlinks | Model credibility and cited sources |

In usability testing I ran for a SaaS product, users completed research tasks nearly 40% faster using AI-powered search tools than with traditional search engines, primarily because they didn’t need to open multiple tabs.

How User Intent Is Interpreted Differently

Traditional search engines infer intent based on keywords, location and historical data. If someone types “apple,” the engine guesses whether they mean the fruit or the company. LLM search behavior handles intent more explicitly. Users clarify their needs within the prompt itself:

“I’m looking for Apple’s quarterly revenue, not nutrition facts.”

This conversational clarity reduces ambiguity. Research from Microsoft’s 2023 Work Trend Index shows that users increasingly expect systems to “grasp what I mean, not just what I type.” LLMs are well suited to this expectation because they interpret the full prompt rather than isolated keywords.

Information Depth, Accuracy and Transparency

One advantage of traditional search is transparency. You can see sources, check publication dates and evaluate credibility yourself. LLM-based search introduces both benefits and risks:

- Pros: Summarized explanations, simplified language, faster understanding

- Cons: Risk of outdated or hallucinated information

Reputable platforms now mitigate these risks by citing sources. For example, Perplexity AI includes inline citations from sources like PubMed or Wikipedia, and OpenAI has publicly acknowledged model limitations and the importance of source attribution in its system cards.

Actionable tip for users: when accuracy matters (medical, legal, financial), ask the LLM to list its sources or cross-check the answer against traditional search results.
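For readers who want to automate part of that tip, here is a minimal sketch of one way to check whether an answer cites any sources. It is not a feature of any particular tool. The function names, the URL pattern and the `min_sources` threshold are illustrative assumptions, and the example URLs are placeholders.

```python
import re
from urllib.parse import urlparse

# Simple pattern for http(s) links; stops at whitespace and closing brackets.
URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")


def extract_cited_domains(answer: str) -> list[str]:
    """Return the unique domains linked in an answer, in order of first appearance."""
    domains: list[str] = []
    for url in URL_PATTERN.findall(answer):
        # Drop sentence punctuation that may trail the link.
        domain = urlparse(url.rstrip(".,;")).netloc.lower()
        if domain and domain not in domains:
            domains.append(domain)
    return domains


def needs_manual_check(answer: str, min_sources: int = 2) -> bool:
    """Flag answers citing fewer than `min_sources` distinct domains (hypothetical threshold)."""
    return len(extract_cited_domains(answer)) < min_sources


if __name__ == "__main__":
    answer = "Rest helps (https://example.org/study). See https://example.com/overview."
    print(extract_cited_domains(answer))
    print(needs_manual_check("Drink water and rest."))
```

Counting domains only shows that sources were listed, not that they support the claim, so the cited pages still need to be read.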

Real-World Use Cases Where LLM Search Behavior Excels

LLM search behavior shines in scenarios where synthesis and explanation are more valuable than raw links. Common use cases include:

- Learning new topics (“Explain blockchain like I’m a beginner”)

- Career guidance and resume feedback

- Coding help and debugging

- Comparing options with personal constraints

As a content strategist, I personally use LLMs to outline articles, validate assumptions and brainstorm angles. What used to take an hour of searching now takes 10 minutes of structured conversation.

When Traditional Search Still Has the Upper Hand

Despite the rise of LLM search behavior, traditional search remains critical in several contexts:

- Breaking news and real-time updates

- Local business discovery (maps, reviews)

- E-commerce price comparisons

- Primary source verification

Google’s dominance in these areas is supported by its massive indexing infrastructure and partnerships. According to StatCounter, Google still holds roughly 90% of the global search engine market, indicating that traditional search is far from obsolete.

How Content Creators and Businesses Should Adapt

The shift in user behavior has implications for SEO, content strategy and product design. Actionable steps include:

- Write content that answers questions clearly and directly

- Structure information so it’s easy for LLMs to summarize

- Build topical authority rather than chasing keywords

- Use schema markup to improve machine readability

Many SEO experts, including Rand Fishkin of SparkToro, have noted that “zero-click searches” and AI answers are reducing traditional traffic but increasing the value of brand authority. Being cited by an LLM may soon matter as much as ranking on page one.
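To make the schema markup step concrete, here is a minimal sketch that generates FAQ markup as JSON-LD using the schema.org `FAQPage`, `Question` and `Answer` types. The helper name `build_faq_jsonld` is an assumption for illustration, and the sample question is taken from this article’s own FAQ.

```python
import json


def build_faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    # ensure_ascii=False keeps curly quotes and other non-ASCII text readable.
    return json.dumps(data, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    markup = build_faq_jsonld([
        (
            "Is traditional search still better for some tasks?",
            "Yes. Shopping comparisons, breaking news and official documents "
            "often benefit from direct source access.",
        )
    ])
    print(markup)
```

The resulting string is typically embedded in the page inside a `<script type="application/ld+json">` element. Whether a given search engine or LLM actually uses the markup is up to that system.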

Ethical Considerations and User Trust

LLM search behavior raises important questions around bias, data privacy and transparency. Organizations like the OECD and UNESCO have published AI ethics guidelines emphasizing:

- Clear disclosure of AI-generated content

- User control over data

- Accountability for misinformation

For users, the practical takeaway is to remain critical thinkers. LLMs are powerful assistants, not absolute authorities. Combining AI-driven insights with traditional search verification creates a more balanced and informed search experience.

How Users Can Choose the Right Search Method

Rather than replacing one another, LLM search behavior and traditional search serve different needs. A simple rule of thumb:

- Use LLMs for understanding, learning and decision support

- Use traditional search for validation, discovery and real-time data

By consciously choosing the right tool, users can save time, reduce frustration and find more relevant information online without sacrificing accuracy or depth.
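The rule of thumb above can be written down as a small lookup, which may be handy for a team checklist or an internal tool. This is only a sketch: the `Method` enum and `choose_method` function are hypothetical names, and the six needs are copied directly from the two bullets.

```python
from enum import Enum


class Method(Enum):
    LLM = "LLM"
    TRADITIONAL = "traditional search"


# The six needs named in the rule of thumb, mapped to the suggested tool.
RULE_OF_THUMB = {
    "understanding": Method.LLM,
    "learning": Method.LLM,
    "decision support": Method.LLM,
    "validation": Method.TRADITIONAL,
    "discovery": Method.TRADITIONAL,
    "real-time data": Method.TRADITIONAL,
}


def choose_method(need: str) -> Method:
    """Return the suggested search method for a need named in the rule of thumb."""
    key = need.strip().lower()
    if key not in RULE_OF_THUMB:
        raise ValueError(f"No rule of thumb for: {need!r}")
    return RULE_OF_THUMB[key]


if __name__ == "__main__":
    print(choose_method("learning").value)
    print(choose_method("real-time data").value)
```

Needs outside the list raise an error rather than guessing, since many real tasks benefit from using both methods in sequence.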

Conclusion

LLM search behavior marks a clear shift from keyword hunting to intent-driven discovery, and that changes how people find and trust information online. Traditional search still matters for comparison and depth, while tools like ChatGPT and Google’s AI Overviews now answer questions in a single, conversational flow, shaping decisions faster than ever. I’ve noticed this myself when researching tools: instead of opening ten tabs, I write one precise prompt and refine it, saving time while expecting clearer sources.

The practical takeaway is simple: write for humans first, structure content for AI understanding and update it often as models evolve. Focus on explaining the “why” behind answers, not just the “what,” and validate claims with credible sources such as Google’s Search Central updates at https://developers.google.com/search. As AI search adoption accelerates in 2026, those who adapt early will earn visibility and trust. Stay curious, test how your audience searches and keep optimizing, because the future belongs to those who learn faster than the algorithms change.

FAQs

What’s the basic difference between LLM search and traditional search?

Traditional search returns a list of links based on keywords, while LLM-based search tries to understand the question and generate a direct, conversational answer. One focuses on finding sources; the other focuses on synthesizing information.

Do people search differently when using an AI-powered search tool?

Yes. Users tend to ask longer, more natural questions and include context, follow-ups, or constraints. With traditional search, queries are usually shorter and more keyword-focused.

Why do LLM searches feel faster even when they use more data?

Because the user gets an immediate summary instead of scanning multiple pages. Even if more data is processed behind the scenes, the cognitive effort for the user is lower.

How does accuracy compare between LLM search and traditional search?

Traditional search is strong at pointing to authoritative sources, but it requires user judgment. LLM search can be very helpful for explanations, but it may occasionally produce incomplete or incorrect information, especially without clear sources.

What happens to browsing when people rely on LLM search?

Browsing tends to decrease. Users often stop after getting an answer instead of clicking through multiple sites, which changes how they explore information and how content is discovered.

Is traditional search still better for some tasks?

Absolutely. Tasks like shopping comparisons, breaking news, academic research, or finding official documents often benefit from traditional search results and direct source access.

How is user intent handled differently in these two search styles?

Traditional search infers intent mainly from keywords and behavior. LLM search interprets intent from the full question, context and conversation history, which can lead to more personalized and relevant responses.