The Evolution of Search in the Age of Generative AI

Digital search is undergoing a structural transformation. Traditional engines were designed to match keywords and rank pages. Modern systems powered by generative AI now interpret intent, understand context, and synthesize direct answers. This shift fundamentally changes how users interact with information and how creators must approach Answer Engine Optimization (AEO).

Generative AI search systems rely on Large Language Models (LLMs) capable of processing natural language with high semantic awareness. Instead of returning a list of links, these systems often deliver complete responses directly within the interface. For content strategists, visibility alone is no longer sufficient. Content must be structured to become a trusted answer source.

From Keyword Matching to Conceptual Understanding

Earlier search algorithms primarily evaluated lexical and link-based signals: keyword frequency, metadata, and backlinks. While effective for simple lookups, they struggled with nuance and complex queries. Generative AI search replaces rigid matching with conceptual comprehension.

When a user asks a detailed question, the engine analyzes meaning rather than isolated terms. A query such as:

“Compare the energy efficiency of heat pumps and furnaces in cold climates”

is interpreted as a request for comparative reasoning, not merely pages containing those words. The system identifies concepts, retrieves relevant knowledge, and produces a synthesized answer.

For AEO practitioners, optimization must move beyond keyword density toward:

- Concept coverage

- Semantic clarity

- Logical information structure

- Explicit relationships between ideas

Content is now evaluated based on how effectively it supports reasoning and answer construction.

Semantic Models and Entity Recognition

Generative search systems are built on transformer-based architectures such as BERT, T5, and the GPT family of models. These architectures use self-attention mechanisms to interpret context across entire passages rather than individual phrases.

This enables entity recognition – the identification of meaningful objects within text:

- People

- Organizations

- Products

- Locations

- Events

- Concepts

For example, a discussion of an entrepreneur and a technology platform is not treated as separate keywords. The system maps relationships, attributes, and contextual associations.

Effective AEO content therefore:

- Clearly defines entities

- Explains relationships

- Provides contextual depth

- Uses consistent terminology

A well-optimized article implicitly builds a mini knowledge graph that AI systems can easily interpret and reuse.
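
One way to picture such a mini knowledge graph is as a set of subject-predicate-object triples. The sketch below is only an illustration: the entity names (`Jane Doe`, `ExamplePlatform`) and the predicate labels are hypothetical, and real systems may represent entities quite differently.

```python
from collections import defaultdict

# Hypothetical triples an article about an entrepreneur and a platform might imply.
TRIPLES = [
    ("Jane Doe", "is_a", "entrepreneur"),
    ("Jane Doe", "founded", "ExamplePlatform"),
    ("ExamplePlatform", "is_a", "technology platform"),
]

def build_graph(triples):
    """Group (predicate, object) pairs under each subject entity."""
    graph = defaultdict(list)
    for subject, predicate, obj in triples:
        graph[subject].append((predicate, obj))
    return graph

graph = build_graph(TRIPLES)
for predicate, obj in graph["Jane Doe"]:
    print(f"Jane Doe --{predicate}--> {obj}")
```

Content that states each of these relationships explicitly, using the same names every time, makes this kind of mapping easier to recover.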

Retrieval-Augmented Generation (RAG)

Pure LLMs can generate fluent language but may produce inaccuracies. Retrieval-Augmented Generation (RAG) mitigates this by combining generative reasoning with external knowledge retrieval.

How RAG Works

- The system converts queries into vector embeddings

- It retrieves semantically similar content chunks from indexed sources

- Retrieved passages are supplied to the LLM as grounding context

- The model generates a response constrained by retrieved data

This approach can improve factual reliability and reduce, though not eliminate, hallucinations, because the model answers from retrieved passages rather than from memory alone.
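
The steps above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: `embed` is a bag-of-words stand-in for a real embedding model, `CHUNKS` is made-up sample content, and no LLM is actually called. The sketch stops at building the grounded prompt.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy stand-in for an embedding model: a sparse term-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, chunks, k=2):
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]

def build_prompt(query, passages):
    """Supply retrieved passages to the model as grounding context."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )

# Illustrative sample chunks (assumed content, not from a real index).
CHUNKS = [
    "Heat pumps move heat rather than generating it.",
    "Furnaces produce heat by burning fuel.",
    "Schema markup describes what a page represents.",
]

query = "How do heat pumps work?"
top = retrieve(query, CHUNKS, k=2)
prompt = build_prompt(query, top)
print(prompt)
```

In a production system the term-count vectors would be replaced by dense embeddings and a vector index, but the flow of embed, retrieve, and ground stays the same.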

Implications for AEO

Content must be designed for machine retrieval:

- Modular sections

- Concise answer-focused passages

- Clear headings

- Self-contained explanations

Dense, unstructured prose reduces the probability of accurate retrieval. Atomic, logically segmented information improves AI usability.
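
To see why headings and self-contained sections matter, consider how a retrieval pipeline might split a page into chunks. The following is a minimal sketch, assuming headings are marked with a leading `#`; the function name `chunk_by_heading` and the sample text are hypothetical, and real pipelines use a variety of chunking strategies.

```python
def chunk_by_heading(text):
    """Split text into (heading, body) chunks at lines starting with '#'."""
    chunks = []
    heading, body = "Introduction", []
    for line in text.splitlines():
        if line.startswith("#"):
            # A new heading closes the previous chunk, if it has any body text.
            if body:
                chunks.append((heading, " ".join(body)))
            heading, body = line.lstrip("#").strip(), []
        elif line.strip():
            body.append(line.strip())
    if body:
        chunks.append((heading, " ".join(body)))
    return chunks

SAMPLE = """# What is RAG
RAG combines retrieval with generation.

# Why structure matters
Modular sections are easier to retrieve.
"""

chunks = chunk_by_heading(SAMPLE)
for heading, body in chunks:
    print(f"{heading}: {body}")
```

Each chunk carries its own heading, so a passage that explains itself without relying on earlier sections remains useful when retrieved in isolation.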

Structured Data as a Communication Layer

While LLMs understand natural language, structured data provides explicit semantic guidance. Schema markup functions as a standardized vocabulary describing what content represents.

Structured data helps AI systems identify:

- Entity types

- Attributes

- Relationships

- Key properties

For instance, marking up a product comparison using Schema.org allows engines to extract precise details rather than infer meaning probabilistically.

Benefits for Generative AI Search

Structured data enhances:

- Answer precision

- Knowledge panel inclusion

- Confidence in fact extraction

- Eligibility for rich results

Formats such as FAQPage schema are particularly valuable because they mirror direct-answer structures used by AI interfaces.
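
As an illustration, FAQPage markup can be generated from question-answer pairs. The sketch below uses Python's standard `json` module and the Schema.org `FAQPage`, `Question`, and `Answer` types; the helper name `faq_jsonld` is hypothetical, and the sample pair is taken from the FAQ at the end of this article.

```python
import json

def faq_jsonld(pairs):
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

pairs = [
    (
        "Can I have a conversation with the search engine now?",
        "In many ways, yes! Generative AI enables a much more interactive search experience.",
    )
]

markup = json.dumps(faq_jsonld(pairs), indent=2)
print(markup)
```

The resulting JSON would typically be embedded in the page inside a `<script type="application/ld+json">` element.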

Designing Content Architectures for AI Comprehension

Generative AI search favors interconnected knowledge structures rather than isolated pages. Content strategies must evolve toward semantic ecosystems.

Topic Clusters and Pillar Models

A pillar page provides broad coverage of a subject, while cluster pages address specific subtopics. This architecture:

- Reinforces domain authority

- Clarifies conceptual boundaries

- Improves retrieval pathways

- Assists AI knowledge mapping

Internal linking is no longer only an SEO tactic. It becomes a mechanism guiding AI interpretation.
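
A simple audit can confirm that a pillar page and its cluster pages actually link to each other. This is a minimal sketch, assuming the site's internal links are already available as a dictionary; the URLs and the function name `missing_links` are hypothetical.

```python
# Hypothetical internal link map: page -> pages it links to.
SITE_LINKS = {
    "/heat-pumps": ["/heat-pumps/cold-climates", "/heat-pumps/costs"],
    "/heat-pumps/cold-climates": ["/heat-pumps"],
    "/heat-pumps/costs": [],
}

def missing_links(pillar, clusters, links):
    """Return (source, target) pairs for absent pillar-cluster links."""
    gaps = []
    for page in clusters:
        if page not in links.get(pillar, []):
            gaps.append((pillar, page))
        if pillar not in links.get(page, []):
            gaps.append((page, pillar))
    return gaps

gaps = missing_links(
    "/heat-pumps",
    ["/heat-pumps/cold-climates", "/heat-pumps/costs"],
    SITE_LINKS,
)
for source, target in gaps:
    print(f"{source} should link to {target}")
```

Here the costs page never links back to its pillar, which is the kind of gap that weakens the cluster as a navigable unit.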

Governance Considerations

Complex architectures require:

- Consistent terminology

- Regular updates

- Avoidance of semantic overlap

- Careful topic mapping

Quality, coherence, and structural integrity are critical.

Personalization and Contextual Relevance

Modern AI systems increasingly tailor responses using contextual signals:

- Prior behavior

- Location

- Query patterns

- Interaction history

This means content relevance is dynamic. Generic material competes poorly against highly contextual resources.

Strategic Adjustments for AEO

- Develop audience-specific content

- Address contextual variations of queries

- Include explicit situational framing

- Strengthen geo-relevance when applicable

Precision improves engagement and increases the likelihood of selection by AI systems.

Measuring Performance in a Direct-Answer Environment

Traditional SEO metrics remain useful but insufficient. Generative AI search often reduces clicks even when content is influential.

Emerging Evaluation Priorities

- Presence in direct-answer interfaces

- Inclusion in AI summaries

- Entity authority recognition

- Query coverage breadth

- Brand visibility effects

Google Search Console data can provide indirect signals, such as impressions that do not lead to clicks, but deeper SERP feature monitoring is often necessary.

Experimental Approaches

Advanced practitioners may:

- Test content against LLM-based QA systems

- Evaluate semantic clarity

- Compare entity density

- A/B test structural variations

Optimization becomes iterative and research-driven.
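
One of these experiments, comparing entity density, can be approximated with a simple count. The sketch below assumes a working definition of entity density as mentions of a chosen entity list per 100 words; that definition, the function name `entity_density`, and the sample texts are all assumptions for illustration, not an established metric.

```python
import re

def entity_density(text, entities):
    """Mentions of the listed entities per 100 words (case-insensitive)."""
    words = re.findall(r"\w+", text)
    if not words:
        return 0.0
    lowered = text.lower()
    mentions = sum(
        len(re.findall(r"\b" + re.escape(entity.lower()) + r"\b", lowered))
        for entity in entities
    )
    return 100.0 * mentions / len(words)

ENTITIES = ["heat pumps", "cold climates"]
variant_a = "Heat pumps move heat. Heat pumps work in cold climates."
variant_b = "They move heat and work in the cold."

density_a = entity_density(variant_a, ENTITIES)
density_b = entity_density(variant_b, ENTITIES)
print(f"Variant A: {density_a:.1f}, Variant B: {density_b:.1f}")
```

A higher score is not automatically better. The number is only useful for comparing structural variants of the same content, for example a version that names its entities explicitly against one that leans on pronouns.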

Trade-Offs and Ethical Considerations

Generative AI introduces new challenges that content creators must address thoughtfully.

Hallucination Risks

AI systems may generate incorrect responses if retrieval context is weak. Content should therefore emphasize:

- Precision

- Internal consistency

- Clear definitions

- Reinforcement of key facts

Bias and Representation

Training data biases can propagate into AI-generated outputs. Ethical AEO demands:

- Inclusive language

- Balanced perspectives

- Avoidance of stereotypes

- Responsible framing

Monetization Shifts

Direct-answer interfaces may reduce traffic. Sustainable strategies often involve:

- Depth beyond basic answers

- Unique insights

- Interactive value

- Brand authority building

Long-term success depends on differentiation rather than volume.

Strategic Outlook for AEO

Generative AI search rewards clarity, structure, and authority. Content that is easy for machines to interpret, retrieve, and synthesize is more likely to be selected as an answer source.

Key priorities moving forward:

- Optimize for concepts, not just keywords

- Design modular, answer-centric content

- Use structured data deliberately

- Build coherent semantic architectures

- Monitor AI-driven SERP behaviors

- Emphasize accuracy and trust

The competitive advantage lies in aligning human knowledge design with machine reasoning capabilities.

FAQs

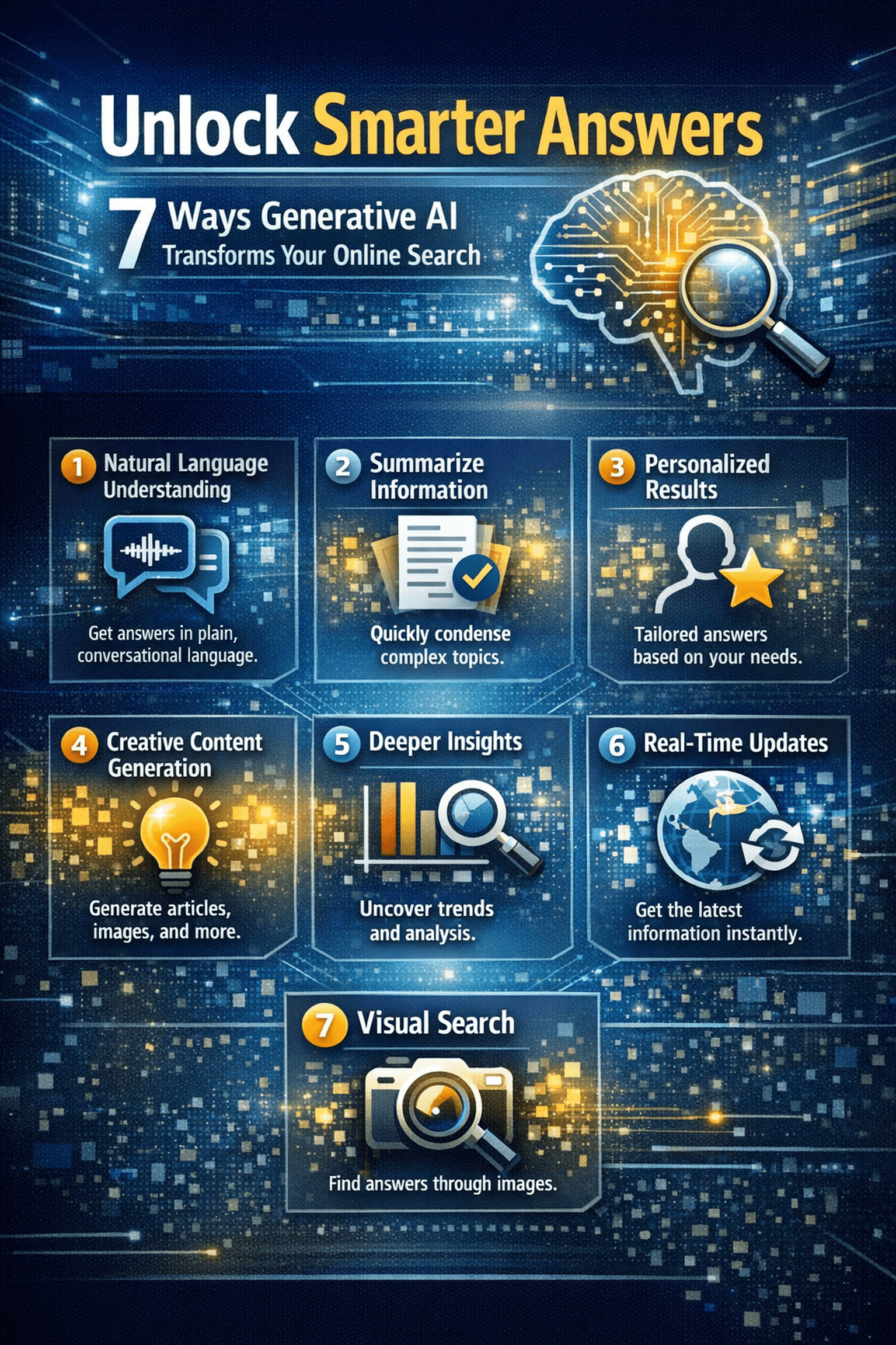

What’s the big deal about generative AI in online search?

Generative AI is changing search from just giving you a list of links to actually providing direct, synthesized answers. Instead of sifting through pages, you get summaries, insights and even newly generated content that directly addresses your query.

How does generative AI make my search results ‘smarter’?

It understands the intent behind your query much better. It can pull data from various sources, summarize complex topics and even create new content (like a paragraph explanation) that directly answers your question, rather than just showing you documents that might contain the answer.

Can it really summarize web pages for me?

Absolutely! One of its superpowers is to distill long articles or multiple search results into concise summaries, saving you a ton of time and helping you grasp the main points quickly without having to read everything.

What if I have a really complicated question? Will it still help?

Yes, especially then! Generative AI excels at handling complex, multi-part, or conversational queries. It can break down your question, find relevant pieces of data and then synthesize them into a coherent answer, something traditional search engines often struggled with.

Is it just for factual questions, or can it help with more creative stuff too?

While it’s fantastic for facts, it goes beyond. It can help brainstorm ideas, provide different perspectives, or even generate creative content based on your search, making it useful for both research and more open-ended inquiries.

How does this save me time when I’m searching online?

By giving you direct answers, concise summaries and synthesized insights upfront, it significantly reduces the need to click through multiple links, read lengthy articles and piece together data yourself. You get to the core of what you’re looking for much faster.

Can I have a conversation with the search engine now?

In many ways, yes! Generative AI enables a much more interactive search experience. You can ask follow-up questions, refine your query based on the initial answer and have a more dynamic dialogue to get precisely the details you need.